A Sine on the Road to Mecca

By Dana Mackenzie

Ancient Muslim methods for finding the "sacred direction" for prayer


DOI: 10.1511/2001.22.0

Turn then thy face towards the Sacred Mosque: wherever ye are, turn your faces towards it....

For centuries, Muslims all over the world have obeyed this command from the Koran, facing Mecca five times a day for prayer. But for a Muslim thousands of miles from Mecca, finding the right direction to pray (the qibla, or "sacred direction") is not so easy. It has even been a source of controversy. Some mosques in Cairo embody two qibla values 10 degrees apart, with the outer walls aligned to one and the inner walls to the other. In North America, some Muslims pray to the northeast, in the direction of the great-circle route (the shortest path along the planet's surface) to Mecca, whereas others pray to the southeast.
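To make the great-circle qibla concrete, here is a minimal Python sketch using standard modern spherical trigonometry. It is not a reconstruction of any medieval procedure. The Kaaba's coordinates are approximate values assumed for illustration, and `qibla_and_distance` is a hypothetical helper name.

```python
import math

# Approximate coordinates of the Kaaba in Mecca, in degrees (assumed for illustration).
MECCA_LAT = 21.4225
MECCA_LON = 39.8262


def qibla_and_distance(lat, lon):
    """Great-circle qibla and angular distance from (lat, lon) to Mecca.

    Returns (bearing in degrees clockwise from true north, central angle in degrees).
    """
    phi, phi_m = math.radians(lat), math.radians(MECCA_LAT)
    dlon = math.radians(MECCA_LON - lon)
    # East and north components of the direction to Mecca in the local horizontal plane.
    east = math.cos(phi_m) * math.sin(dlon)
    north = math.cos(phi) * math.sin(phi_m) - math.sin(phi) * math.cos(phi_m) * math.cos(dlon)
    qibla = math.degrees(math.atan2(east, north)) % 360
    # Spherical law of cosines for the central angle; clamp to guard against rounding.
    cos_d = math.sin(phi) * math.sin(phi_m) + math.cos(phi) * math.cos(phi_m) * math.cos(dlon)
    distance = math.degrees(math.acos(max(-1.0, min(1.0, cos_d))))
    return qibla, distance


if __name__ == "__main__":
    for name, lat, lon in [("New York", 40.7128, -74.0060), ("Cairo", 30.0444, 31.2357)]:
        q, d = qibla_and_distance(lat, lon)
        print(f"{name}: qibla {q:.1f} deg from north, {d:.1f} deg of arc from Mecca")
```

For New York the great-circle bearing comes out at roughly 58 degrees east of north, which is the northeast direction the article mentions. For Cairo it is roughly 136 degrees, to the southeast.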

Christie’s, London

Medieval Muslims were using sophisticated mathematics to solve this problem centuries before the equivalent discoveries were made in Europe; Muslim scientists already knew how to correct for the Earth's curvature. Two recently discovered instruments have proved that Islamic mathematicians were even further ahead of their time than anyone knew. These Mecca-centered world maps, cast in brass, indicate the direction and distance to Mecca from any point in the medieval Muslim world, and they do so with a type of map projection that was unknown in the West until the 20th century.

"I had been working on the subject [of the qibla] for 20 years, and the discovery of these maps took me by surprise," says David King, a historian of science at the Johann Wolfgang Goethe University in Frankfurt, Germany. For the last decade King has been working to discover who made the maps and, more important, who designed them. All the evidence suggests that they were fabricated near Isfahan, in present-day Iran, during the Safavid dynasty (which began in 1502 and ended in 1722). However, King believes that the grid that is the maps' most distinctive feature must have been discovered centuries earlier.

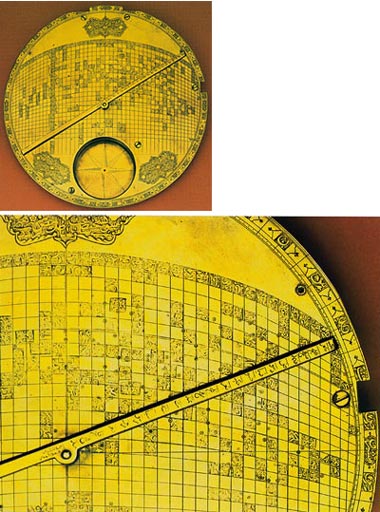

The first of the two maps surfaced in 1989, when it was auctioned at Sotheby's of London. An anonymous collector discovered the second one at a Parisian antique dealership in 1995. The two instruments are so similar that they may have come from the same workshop. They are about 9 inches wide and originally came with three attachments: a compass, a sundial, and a rotating pointer that indicates both the direction and distance to Mecca. The base contains a curved grid of latitudes and longitudes, with the latitudes represented by circles and the longitudes by vertical lines; more than 100 holes are punched into the brass to indicate various locations. (Mecca is, of course, at the center.) Because the instrument was not meant for navigation, it looks like no map you have ever seen: There are no land forms, no rivers, no oceans.

"It's not surprising that they had the data to enter onto the grid, and the motivation [to find the qibla]," says Len Berggren, a historian of mathematics at Simon Fraser University in Vancouver. "What is surprising is that someone discovered the map projection to do it." Not only are the lines of latitude curved and the lines of longitude unevenly spaced, both unprecedented innovations in the Islamic world, but the spacing is precisely calibrated so that the distance read off the pointer is proportional to the sine of the angular distance to Mecca in the real world. If the lines had been evenly spaced, the instrument would not have worked.
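The property Berggren describes can be illustrated with a short Python sketch. It assumes one possible construction, not necessarily the one engraved on the Isfahan instruments: each locality is placed at minus the east and north components of its great-circle direction to Mecca, so a pointer laid from that locality to the center lies along the qibla and has length sin(d), where d is the angular distance. In this construction the longitude lines come out vertical and spaced like the sine of the longitude difference, while the latitude lines come out curved. The function names, the approximate Kaaba coordinates, and the evenly spaced comparison grid are all hypothetical choices for illustration.

```python
import math

# Approximate coordinates of the Kaaba, in degrees (assumed for illustration).
MECCA_LAT, MECCA_LON = 21.4225, 39.8262


def grid_position(lat, lon):
    """Hypothetical Mecca-centred grid: Mecca at (0, 0), unit radius.

    A locality is placed at minus the east/north components of its great-circle
    direction to Mecca, so a pointer from the locality to the centre lies along
    the qibla and has length sin(angular distance).
    """
    phi, phi_m = math.radians(lat), math.radians(MECCA_LAT)
    dlon = math.radians(MECCA_LON - lon)
    east = math.cos(phi_m) * math.sin(dlon)  # depends on longitude only
    north = math.cos(phi) * math.sin(phi_m) - math.sin(phi) * math.cos(phi_m) * math.cos(dlon)
    return -east, -north


def read_pointer(lat, lon):
    """Read (qibla in degrees from north, pointer length) off the grid."""
    x, y = grid_position(lat, lon)
    dx, dy = -x, -y  # pointer runs from the locality to Mecca at the origin
    return math.degrees(math.atan2(dx, dy)) % 360, math.hypot(dx, dy)


def naive_bearing(lat, lon):
    """Direction to Mecca read off an evenly spaced latitude/longitude grid."""
    return math.degrees(math.atan2(MECCA_LON - lon, MECCA_LAT - lat)) % 360


if __name__ == "__main__":
    for name, lat, lon in [("Isfahan", 32.6546, 51.6680), ("Cordoba", 37.8882, -4.7794)]:
        q, s = read_pointer(lat, lon)
        d = math.degrees(math.asin(s))  # valid only for d <= 90 degrees
        print(f"{name}: qibla {q:.1f} deg, distance {d:.1f} deg, "
              f"evenly spaced grid says {naive_bearing(lat, lon):.1f} deg")
    # Meridians are vertical but unevenly spaced: x grows like sin(delta longitude).
    for step in (10, 20, 30, 40):
        print(step, round(grid_position(0, MECCA_LON + step)[0], 4))
```

On this grid Isfahan's qibla comes out to the southwest, and for Cordoba the evenly spaced grid misses the great-circle qibla by about 10 degrees. Because sin d peaks at 90 degrees, a pointer length alone cannot distinguish d from 180° − d, so the `asin` step is only meaningful for places within a quarter-circle of Mecca.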

According to King, the artisans of Isfahan could never have come up with such a grid themselves; they were accomplished astrolabe makers, but not mathematicians. Therefore, they had to be copying an earlier model.

Where did the original model come from? King has some intriguing speculations. As early as the 9th century, Islamic astronomers had devised a method for computing the qibla that happened to produce, as an intermediate step, the sine of the distance to Mecca. The map projections might have been discovered at the same time. Indeed, King's colleague François Charette has shown that the grids are, in a sense, a translation of the equations into cartographic form. Alternatively, a later scholar who was familiar with the trigonometric method might have devised the map as an ingenious simplification. King suspects Abu 'l-Rayhan al-Biruni (973–1048), considered the leading scientist of medieval Islam, who lived in Ghazna (in present-day Afghanistan) and wrote an influential and original treatise on the qibla.

Inevitably, less romantic possibilities have been suggested. The catalogue that Sotheby's printed when the first instrument went up for auction states: "The projection is of western European inspiration ... and this unusual instrument is interesting as evidence of the assimilation of European science and technology in Persia in the 18th century." King strongly disagrees with that interpretation, citing both physical and historical evidence. Even if European mathematicians had worked on the qibla-finding problem, he argues, they would not have stumbled on a solution that was directly inspired by a 9th-century Islamic formula. "The fact that the instrument uses the sine of the distance is, to me, the most compelling argument" for its early Islamic origin, King says. There is also no evidence that the European scholars who were in Persia at the time brought with them anything like a Mecca-centered world map. Even if they could have, they would not have wanted to: They were in Persia to convert Muslims, not to make it easier for them to practice their religion.

More clues to the origin of these instruments may yet come to light. "So many Arabic manuscripts lie not only unstudied but uncatalogued in the libraries of the world," Berggren says. They may contain descriptions of similar qibla-finding world maps, which went unrecognized before because historians didn't know what they were reading about. Says Berggren, "Not only do we know what to look for now, but we know it's worth looking."—Dana Mackenzie
