Whatever Became of Holography?

By Sean F. Johnston

The once-futuristic technology has less public glamour nowadays, but it still plays a current role in science

DOI: 10.1511/2011.93.482

A generation ago, hologram exhibitions attracted hundreds of thousands of visitors in major cities around the world, and entrepreneurs confidently forecast applications in art, photography and television.

As holography became more ubiquitous, however, it lost some of its luster for public audiences as well as for professional scientists and engineers. But the technology still makes an impact today, although not with the same punch it had a quarter-century ago. Popular culture celebrates it through science fiction and a steady trickle of news reports about imminent consumer advances—although there are a number of modern instances where semi-transparent images of television announcers and pop stars are mislabeled as holograms, further confusing consumers who don’t understand what holograms are realistically capable of showing.

Jonathan Blair/National Geographic Stock

The field that once represented futuristic progress gradually has been recast as a fertile technique at the heart of modern science, and ongoing research continues to pay dividends. But the science and technology may now seem old-hat. Where the field of digital electronics offers real-time, high-definition displays and three-dimensional television, much of holography remains stolidly analog. Audiences once amazed by lasers and stunningly realistic holograms have become jaded in the face of ubiquitous and unremarkable credit-card icons. And yet the history of this field illustrates some of the best science and most impressive technology of the past two generations. How did holography get to this point, and where might it be going?

The answers are often surprising even to many of the participants in the field, because holography has been continually fragmented between disciplines as distinct as art and electronics research, with some of this separation dating back to the end of World War II. For a subject often deemed unintuitive, it has been frequently reinvented: The field and its possibilities were conceived afresh for waves of successive audiences. Although holography remains a magical and inspirational field ripe with possibilities, it also demonstrates the dangers of unrefined forecasting and rosy visions of progress. Beyond the enduring fascination of the subject itself, holography provides important lessons for understanding the cultural factors behind scientific advances and technological change.

Although it came to represent high technology, holography was born from unlikely sources. The origins of technologies can be notoriously difficult to trace, but in this case there were at least three separate threads, in three countries, woven together to create the new subject. Those threads were tangled with others that had been conceived a generation or two earlier, or borrowed from neighboring fields. Each played a role in transforming a staid and unpopular science—optics—into a revolutionary subject at the forefront of late-20th-century physics.

By the end of World War II, optics had become a routine, if highly sophisticated, engineering field. Geometrical optics—the science of lens, camera and telescope design—was at the heart of important national industries, especially following the demands of wartime production. But relatively few physicists saw their future in a domain with such a heavy emphasis on applied technology. Its high-science counterpart, physical optics—dealing with wave phenomena such as interference and diffraction—had become a major research field during the late 19th century, but it had generated relatively little subsequent momentum in research and few practical applications.

Illustration by Tom Dunne.

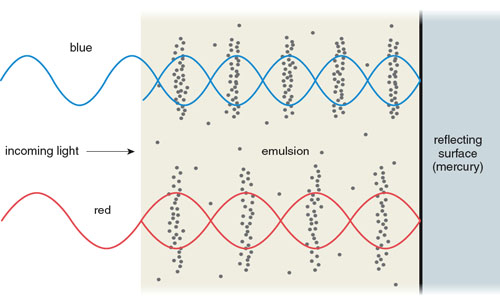

Only two such applications attracted much public attention. The first was an ingenious concept for color photography invented by French physicist Gabriel Lippmann during the 1890s. His technique used a fine-grained photographic emulsion to record light of different wavelengths as standing waves (where light waves traveling through the emulsion combine with their reflections from a mirror to produce maximum intensities at several fixed depths). Once developed, these various monochrome patterns forming the dark areas of the image would reflect and selectively reinforce (or in other words, add to the intensity of) the light of the original wavelengths to reproduce full color (see Figure 2).
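As a rough illustration of the standing-wave idea, the short sketch below computes how far apart the intensity maxima sit inside the emulsion for a few colors. It assumes light arriving at normal incidence and an emulsion refractive index of about 1.5; both values, and the function name, are illustrative assumptions rather than figures from Lippmann's work.

```python
def antinode_spacing_nm(wavelength_nm, refractive_index=1.5):
    """Distance between adjacent intensity maxima of a standing wave.

    Inside a medium the wavelength shrinks to wavelength / n, and the
    maxima of a standing wave are half of that wavelength apart.
    The default index of 1.5 is an assumed, typical value.
    """
    return wavelength_nm / (2.0 * refractive_index)


# Illustrative wavelengths only; not taken from the article.
for color, wavelength in [("blue", 450.0), ("green", 550.0), ("red", 650.0)]:
    spacing = antinode_spacing_nm(wavelength)
    print(f"{color:5s} {wavelength:5.0f} nm -> layers about {spacing:.1f} nm apart")
```

Because each color leaves layers with its own spacing, the developed plate reflects most strongly the wavelength that matches that spacing, which is why such a fine-grained emulsion was needed.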

The second clever concept was phase-contrast microscopy, a technique for imaging transparent specimens, which won its creator, the Dutch physicist Frits Zernike, the Nobel Prize in 1953. In Zernike’s technique, light waves transmitted by the microscope sample are delayed in transit and shifted in phase (so that the peaks of their waves occur at a different position) because of their slower speed in that substance. By comparing the transmitted light to a reference beam, the effects of sample thickness and optical density can be visualized. Zernike later applied such ideas to optical metrology, measuring the aberrations (or imperfections) of concave mirrors.

Neither of these techniques created much of a stir. Although Lippmann’s and Zernike’s insights linked high science to the relatively mundane fields of photography and microscopy, their relevance to the average person was little recognized. Lippmann’s elegant concept was awarded the 1908 Nobel Prize in Physics, but it proved relatively slow and painstaking to use, and frustrating to view. Zernike’s approach, although earning equally high kudos, remained a specialist’s tool employed mainly by biological and geological scientists. Neither seemed amenable to widespread utility. In his Nobel acceptance speech, Zernike wrote:

On looking back to this event, I am impressed by the great limitations of the human mind. How quick are we to learn, that is, to imitate what others have done or thought before. And how slow to understand, that is, to see the deeper connections. Slowest of all, however, are we in inventing new connections or even in applying old ideas in a new field.

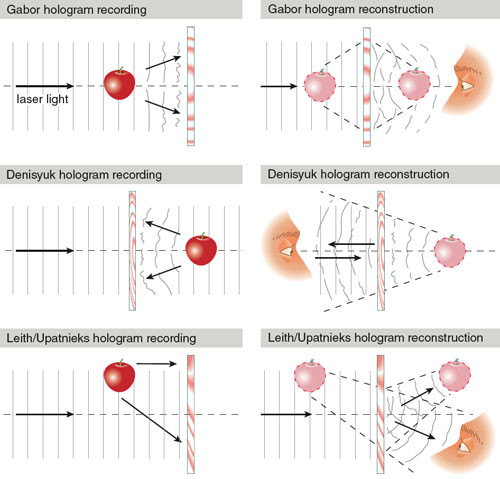

The three direct precursors of holography took inspiration from this previous optics research. All were equally ingenious, if disconnected and relatively unambitious at their beginnings. The first was an idea conceived in 1948 by Dennis Gabor, an expatriate Hungarian working in an English electrical-engineering firm. Gabor, a creative engineer at ease with mathematical physics but with no background in optics, devised a scheme for improving the imaging quality of the first generation of electron microscopes, which were then being manufactured by a sister company to his firm. His wavefront reconstruction technique was a two-step process (see Figure 3). First, the microscopic specimen scattered an electron beam (electrons can be understood as waves, just like visible light), and the scattered beam was recorded as a pattern on photographic film that he dubbed a hologram (from Greek roots, meaning “whole drawing”). Second, this hologram was put into a type of projection system to focus light diffracted by the hologram pattern into an optical image that would be viewed through an eyepiece.

Illustration by Tom Dunne.

The concept was clever, but far from elegant. Virtually incomprehensible to his contemporaries, including senior scientists such as Nobelists Sir Lawrence Bragg and Max Born, Gabor’s papers excited little interest from microscopists. By the mid-1950s his idea of holoscopy or diffraction microscopy was languishing, deemed a white elephant by the small industrial research group that had strived to make it worthwhile. They and a handful of other researchers discovered both practical and fundamental flaws, finding little to publish and nothing to commercialize. Gabor’s holograms required a monochromatic electron beam, but generating a constant-energy electron source that would be stable over the several minutes of exposure proved impossible. The resulting holograms had only one or two interference fringes (bright or dark bands where the overlapping waves either reinforced or canceled out), and they could not reconstruct a recognizable image. And, at best, his scheme would create two confused images: one a focused image of the original microscopic object, the other a fuzzy virtual image superimposed over it. Over the next decade, Gabor, having gained the post of Reader in Electronics at Imperial College based on the potential of his invention, instead turned to the more fertile research fields of television engineering and nuclear-fusion modeling.

The second novel conception appeared about a decade later in Leningrad, where a graduate student, Yuri Denisyuk, was pursuing an advanced degree in physics after having worked for a few years on rather traditional problems at the Vavilov State Optical Institute. Denisyuk was inspired by the goal of recording or reproducing the full characteristics of a wavefield of light. He imagined capturing a wavefront of light at an arbitrary position in space—just as human eyes do—so that a full-color 3D scene could be visualized. Broader than the ideas of Gabor, Denisyuk’s notions generalized aspects of Lippmann’s photography in a dramatic new form, although its practical implementation was limited. He recognized that an arbitrary wavefront of light could be recorded as a standing wave in a photographic emulsion by using monochromatic light, and that this recorded pattern could subsequently reflect light from the plate to recreate that wavefront. Denisyuk’s concept avoided the fundamental flaw of overlapping images, but he faced practical problems similar to Gabor’s. Visible light sources such as optically filtered atomic-emission lamps (which sequestered a purified element, such as sodium or tungsten, inside a bulb, then electrically stimulated it to emit characteristic wavelengths) were dim and far from adequately monochromatic. He was able to create “wave photographs” that acted like shallow curved mirrors or reflective flat rulers, but little of immediate practical value. To make matters worse, his contemporaries discounted both his theory and results, and Denisyuk returned to more mundane work by the early 1960s.

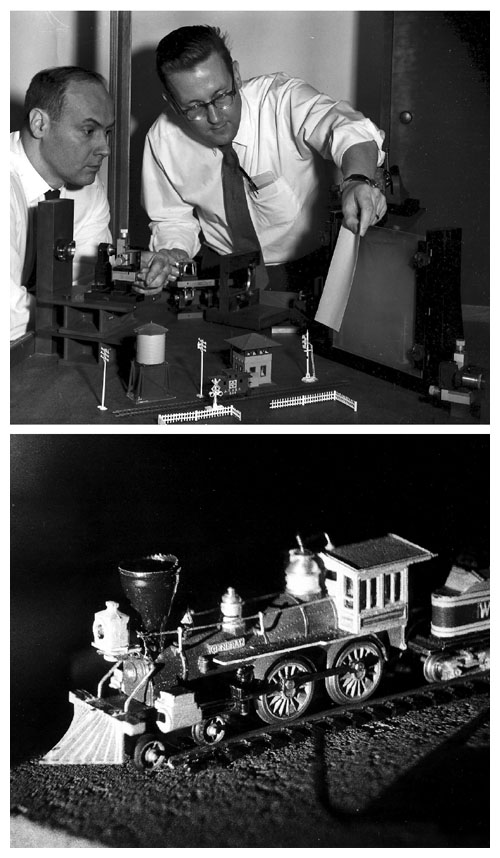

Photographs courtesy of Juris Upatnieks.

The third clever idea had even less connection with traditional optics. In the 1950s at Willow Run Laboratories, a classified research-and-development facility of the University of Michigan dedicated to military contracts, the young electrical engineer Emmett Leith was assigned to a project designing new radar systems. Leith was working on ways of processing data from what became known as side-looking or synthetic aperture radar (which uses an airborne antenna to acquire multiple echoes of the ground that are combined to create a signal which, if plotted, looks remarkably like a hologram). To decode this signal pattern into a radar image of the terrain, his electrical-engineering colleagues envisaged a complex electronic system of magnetic-tape recording and analog filtering (using capacitors, inductors and other electrical components to control which types of waves could pass through), possibly with the aid of the relatively primitive digital computers then becoming available. Between 1954 and 1959, however, Leith and his colleagues gradually reconceived a solution using an optical processing system. In effect, they merged the mathematical theories of physical optics with the state-of-the-art communication theory that was growing in postwar electrical engineering. The synthesis was powerful and generic. This new applied science, also being explored independently by a handful of French investigators of the period, mixed physical optics, electrical theory and information processing to create optics with muscles.

Military security prevented publication. As Leith was later to write, “holography really did not shrink in the 1955–62 period as one would erroneously conclude from the open literature. In a manner of speaking, it went underground.” But Leith used his spare time to explore the new synthesis further, especially after he happened upon Gabor’s work. By 1961, he and his colleague Juris Upatnieks had discovered theoretical and practical ways of solving Gabor’s problems, and had demonstrated ways of encoding, and then reconstructing, an image on a photographic transparency by the two-step hologram process. They solved the issue of overlapping images by applying communication theory. The interference pattern of the hologram could be understood as the mixing of two signals, a reference wave (the original monochromatic wave) and a sample wave (a portion of the wave that was perturbed by diffraction or reflection from the object). Just as radio communications rely on time-varying waves, holographic information relies on spatially-varying patterns of the hologram. The two images—amounting to sidebands (the “spillover” signal when information is encoded in a wave) of the reference wave’s carrier frequency—could be separated simply by offsetting the reference and sample beams.
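The sideband argument can be made concrete with a small one-dimensional numerical sketch. It records the intensity of a reference wave plus a weak object wave, once with the two beams in line (as in Gabor's scheme) and once with the reference offset, and then lists which spatial frequencies appear in the recorded pattern. The object wave, the plate sampling and the carrier value of 40 cycles are all hypothetical choices made for illustration; this is not a reconstruction of Leith and Upatnieks' own analysis.

```python
import cmath
import math

N = 256  # samples across the hologram plate (illustrative)

# Hypothetical object wave: a few weak spatial-frequency components,
# given as {cycles per plate width: amplitude}.
OBJECT_COMPONENTS = {-5: 0.10, -2: 0.20, 1: 0.15, 4: 0.10}


def wave(freq, n):
    """Unit-amplitude complex wave of `freq` cycles per plate at sample n."""
    return cmath.exp(2j * math.pi * freq * n / N)


def hologram_intensity(carrier):
    """Recorded intensity |R + O|^2 for a reference offset by `carrier` cycles."""
    recorded = []
    for n in range(N):
        reference = wave(carrier, n)
        obj = sum(a * wave(f, n) for f, a in OBJECT_COMPONENTS.items())
        recorded.append(abs(reference + obj) ** 2)
    return recorded


def occupied_frequencies(signal, threshold=1e-6):
    """Signed spatial frequencies whose Fourier coefficient exceeds `threshold`."""
    found = set()
    for k in range(-N // 2, N // 2):
        coeff = sum(s * wave(-k, n) for n, s in enumerate(signal)) / N
        if abs(coeff) > threshold:
            found.add(k)
    return found


in_line = occupied_frequencies(hologram_intensity(0))
off_axis = occupied_frequencies(hologram_intensity(40))
print("in-line :", sorted(in_line))
print("off-axis:", sorted(off_axis))
```

In the in-line case every term crowds into the same low-frequency band, so the two images cannot be pulled apart. With the offset reference, the two image terms move out to bands centered on plus and minus the carrier frequency, clear of the low-frequency clutter, which is the separation the paragraph above describes.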

Leith and Upatnieks’ method and analysis, divorced cleanly from their classified work, was named lensless photography by the American Institute of Physics, and it began to make small ripples among American researchers. But although their method worked elegantly for two-dimensional transparencies, they, too, were limited by the available light sources. A bigger splash resulted in 1963 when they substituted a newly available laser as their filtered light source to record and reconstruct the image of 3D objects. Although it had been predicted in principle, the uncanny realness of that image amazed all who saw it: Unlike Victorian stereopticon slides and 1950s 3D movies that required special glasses, the new laser holograms were indistinguishable from windows overlooking a 3D tabletop scene. Over the next two years, the technology rippled out to engineers and scientists around the world.

By 1966, similarities between the work of Gabor, Denisyuk, and Leith and Upatnieks were being recognized, and the expanding field became known as holography. Although the trajectory of the research was aligned closely with American developments, Denisyuk’s work was rehabilitated at home and abroad. Soviet research in the field was officially supported, in part, for its value in Cold War rhetoric (indeed, Denisyuk and Leith each later discovered that much of their subsequent research for military sponsors paralleled the other’s). And Gabor’s self-assessed failure, too, was reversed when he was the sole winner of the 1971 Nobel Prize in Physics, a decision that raised much contention in the field at the time. From an unpromising novelty 20 years earlier, holography had become a competitive field divided by disputes over whose work had priority among the numerous investigators who took up the subject.

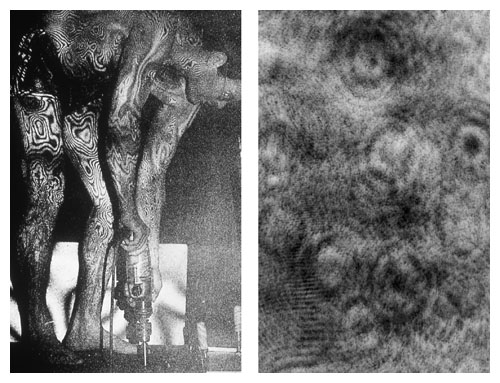

What had been a theory-driven and narrow application became an experimental subject with limitless horizons. From the mid-1960s, a jostling assortment of university and industrial labs explored holography to decide what holograms were good for and how they could be improved. Publications on the new subject had expanded 25-fold by the end of the decade, and companies sprouted up to exploit the commercial possibilities. Willow Run Labs, now lubricated with nonclassified contracts, had a head start. Working in pairs, its staff separately invented techniques of holographic interferometry for visualizing dimensional changes in specimens. This method allowed strains such as temperature expansion and mechanical deformation to be quantified empirically. It could also reveal the precise surface dimensional contours and mechanical vibration modes of engineering samples for use in building structures or machinery, or even visualize subtleties of airflow in aviation studies. Similar results were discovered independently at widely scattered labs as researchers scrambled to try novel optical configurations, exposure regimes and processing techniques. The immediate beneficiaries were mechanical engineers, interested in assessing designs in ways that were beyond conventional mathematical analysis, and metrologists, for whom measurements made by holography provided unimagined sensitivity and versatility. In one of the first commercial spinoffs, some Willow Run personnel formed GC-Optronics, specializing in the holographic testing of aircraft components to reveal lamination flaws in tires and welding faults in panels. The customers of choice—just as they had been for Leith at Willow Run and Denisyuk at the Vavilov Institute—continued to be government clients.

Image at left courtesy of Lennart Svenson; image at right courtesy of the author.

Beyond such fortuitous developments, participants began to seek commercial applications more actively. Another early Willow Run offshoot, for example, was Conductron Corporation. Using the funding strategies that had worked with government sponsors, the company courted investors by promoting holography as a technology that would inevitably progress. It aggressively encouraged novelty, seeking to extend holography’s capabilities. During the late 1960s its engineers produced large demonstration holograms for automobile companies and mass-produced holograms as inserts for a science encyclopedia. The company developed a pulsed laser (a bit like a sophisticated camera flash) that for the first time made it possible to capture holograms of living people (previously, inanimate objects were used because the subject had to remain completely still), and promoted them as eye-catching displays for advertisers. It created full-color holograms by combining red, green and blue lasers for recording and viewing, and it devised equipment for showing brief holographic animated movies. An eminent showman as well as a physicist, Conductron’s founder Kip Siegel touted an impending commercial future for holograms of all kinds. To shareholders, employees and potential investors, he promised that the United States would have the only holographic television, cinema and home movies within a decade, and he publicly urged his staff toward the goal of holographing the next Olympic Games.

More broadly, technologists began to make holography familiar. The early years of the subject began to be portrayed as analogous to the history of photography. The first equipment was expensive, required long exposures and was technically limited; within a few years, faster, better, cheaper and more varied holograms were becoming available; and the expertise was percolating downward from scientists to engineers to entrepreneurs. In this view, holography seemed guaranteed to conquer the commercial sphere and to become integrated into every aspect of modern life, just as photography had done.

Siegel’s portrayal of holography as a future consumer industry relied on an implicit faith, widely shared by many of his contemporaries, in scientific, technical and economic progress. Yet his claims far exceeded the capabilities and expectations of his engineers, and the commercial predictions diverged widely from the most optimistic technical extrapolations.

At the heart of these progressive visions was a series of remarkable inventions and the confidence that they could be extended in the years to come. The Leith-Upatnieks hologram fascinated its first viewers and was rapidly scaled up from tiny 1-by-5-inch tests to 11-by-14-inch laser-lit windows into virtual space, offering views of objects literally yards deep. In 1965 its sibling, the double-exposed holographic interferogram, compared two holograms of the same object, one before and one after alteration, to reveal microscopic engineering effects to the naked eye. With suitable arrangements, holographic nondestructive testing could even be done in real time, allowing engineers to quantify a material’s movements in fractions of a wavelength. Starting in 1967 pulsed-laser holograms could do all this for transitory phenomena—allowing leisurely analysis of 3D bullet impacts, fuel mixing or cloud formation. New varieties of holograms were devised with encouraging regularity.
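To give a sense of the sensitivity involved, the sketch below converts a count of fringes seen on a double-exposure interferogram into a surface displacement. It assumes the simplest textbook geometry, with illumination and viewing both along the surface normal, so that each fringe corresponds to half a wavelength of out-of-plane movement. The helium-neon wavelength of 632.8 nm and the function name are assumptions for illustration, not details from the article.

```python
HENE_WAVELENGTH_NM = 632.8  # assumed red helium-neon laser line


def displacement_nm(fringe_count, wavelength_nm=HENE_WAVELENGTH_NM):
    """Out-of-plane displacement implied by a number of fringes.

    Assumes illumination and viewing along the surface normal, where
    moving the surface by d changes the round-trip path by 2 * d, so
    one full fringe corresponds to d = wavelength / 2.
    """
    return fringe_count * wavelength_nm / 2.0


for fringes in (0.25, 1, 10):
    d = displacement_nm(fringes)
    print(f"{fringes:>5} fringes -> {d:8.1f} nm ({d / 1000.0:.3f} micrometers)")
```

Under these assumptions a quarter of a fringe already signals a movement of under a tenth of a micrometer, which is why the technique appealed to engineers and metrologists.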

Holograms created by the Multiplex Company; photographs courtesy of Jonathan Ross.

Denisyuk’s papers, like Soviet research in other scientific fields, were routinely translated into English but went largely unrecognized in the West. Thus at least three American groups independently rediscovered and claimed to be the first to develop reflection holograms. Like Lippmann’s photographs, this type of hologram can reconstruct a single- or multiple-color image from a white-light source. This ability meant that Denisyuk holograms (known as Lippmann or reflection holograms to American specialists of the period), although recorded with a laser, could be viewed solely in white light, and yielded a clear 3D image behind a plate. Exposure could also be done remarkably simply, by placing the photographic plate in front of the object to be recorded and then illuminating them both directly with a single laser beam. Engineers didn’t see much promise in such products for metrology, but for Soviet museums seeking to make their collections available to widespread audiences, Denisyuk holograms proved increasingly popular for traveling exhibitions from the 1970s onward.

There were other forms of white-light holograms, too. The simplest was recorded by using a lens to focus an image of the object in the plane of the hologram plate. This image-plane hologram, explored increasingly from 1966, could be viewed with a white-light source to generate an image that seemed to straddle the plate, appearing somewhat fuzzier at points farther from the surface. With a shift of optical arrangement, the image could even be made to appear in front of the hologram plate, hanging in space while evading viewers’ hands. Year by year, the element of awe was refined and extended: The unsettling lifelikeness of the Leith-Upatnieks hologram became even more eerily uncanny in such projected-image holograms. Such real-world improvements were accompanied by popular anticipation that begged for them to be extrapolated into the certainly impossible Star Wars holograms of 1970s cinema and the holodecks in Star Trek: The Next Generation of 1980s television, both of which projected a holographic image away from the viewer without the use of physical plates.

Despite these unrealistic expectations, new discoveries continued in laboratories. An important new variant was developed by Stephen Benton of Polaroid Corporation (and later the Massachusetts Institute of Technology) in 1968 and publicized during the early 1970s. Popularly called the rainbow hologram, it was recorded with an additional optical component: a horizontal slit that limited vertical parallax, the ability to look over foreground objects to see behind them. When the hologram was viewed subsequently in white light, the slit image was smeared into a spectrum of wavelengths, and the object viewed through it was revealed in a series of primary colors. With the use of clever multiple exposures—which were explored more by artists than scientists—such a hologram could synthesize a full-color image viewable in white light.

The final major addition to the toolbox of holography during the 1970s was the holographic stereogram, developed commercially by physicist Lloyd Cross. In its simplest form, this image is a series of Leith-Upatnieks holograms arranged as vertical strips. If the hologram is curved into a cylinder, each eye will view the image through different strips to visualize a 3D scene. The advantage of a stereogram is that each hologram can be recorded from a 2D frame of movie film, and the movie itself can be filmed in the conventional way. With suitable movement of the movie camera, a hologram can be synthesized from arbitrary footage, thus liberating 3D hologram scenes from the laboratory and the need to use expensive and dangerous high-powered lasers to view them.

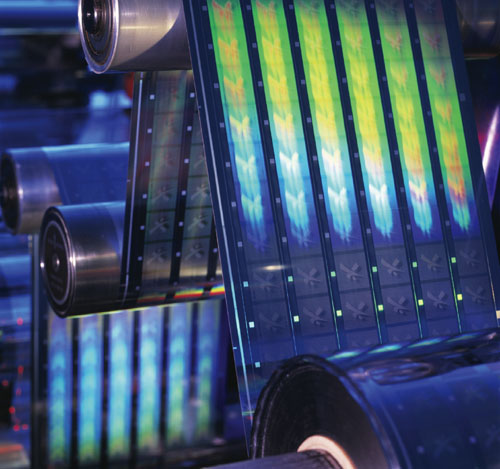

During the early 1980s, holograms underwent their greatest transformation. Methods of embossing plastic film or foil—essentially a hot-stamping process—created low-cost reflective holograms from masters that could be either of the image-plane or rainbow types. The innovation brought these relatively low-quality but impressively 3D images to a wide range of new uses: magazine advertising, packaging and anticounterfeiting devices. Holograms were everywhere.

Holography affected broader audiences than most new scientific fields. By the late 1960s, the subject was becoming accessible not just to scientists and engineers, but also to other niche users, enthusiasts and the wider public. The first small public exhibitions of holograms took place in university physics labs, and newspapers and magazines such as Life and Popular Mechanics described the work of Leith and Upatnieks to an eager public. Scientific American not only carried articles on the new science but explained to amateur scientists how to record and view their own using homemade lasers.

Pascal Goetgheluck/Photo Researchers, Inc.

By the end of the decade, a handful of artists had been attracted to the technology. Although the spectacle of holograms awed early audiences, their subject matter, usually still-lifes improvised from laboratory apparatus or knick-knacks, quickly paled. Aesthetic exploration of the new medium offered unique opportunities. Holograms were particularly intriguing for artists associated with the Art and Technology and Science and Art movements that were promoted by enthusiasts straddling these two cultures, such as electrical engineer Billy Klüver at Bell Telephone Laboratories. Others made comparisons between the potential of artistic holography and the work of Andy Warhol and Robert Rauschenberg, who experimented with mixed media. Early cooperation between scientists and artists fostered art holography. Among the first artists were Bruce Nauman, who used the pulsed laser at Conductron to record his facial grimaces; Margaret Benyon in England, working initially in a physics lab at the University of Nottingham; Harriet Casdin-Silver, borrowing facilities at American Optical Research Labs in Massachusetts; and Carl Fredrik Reuterswärd, who picked up technical expertise from scientists and engineers at the University of Uppsala, the Institute of Technology in Stockholm and the University of Lausanne.

Such aesthetic innovators initially displayed their work to small and often bemused audiences. A greater impact was made by craft-oriented artisans, many of whom began with both technical backgrounds and artistic leanings. Their pioneer was Lloyd Cross, an ex-Willow Run technologist who successively started one of the first laser companies, joined Conductron, invented light-show apparatus and then coproduced one of the first public holographic art shows, N-Dimensional Space, at the Finch College Museum of Art in New York in 1970. He recalled imagining holography as “a craft, an art or a trade” in 1968, an uncommon idea at a time when it was still an elite activity of well-funded professionals.

By 1971, Cross had moved with artist associates to San Francisco, where they set up a School of Holography for the general public, beginning a technical commune that hosted waves of participants over the next few years. The Bay Area school provided a countercultural vision of holography, linking the techniques and products with personal fulfillment, altered consciousness and even drug experiences. The members developed inexpensive equipment that could be constructed, assembled and dismantled for moving to the next rented basement, liberating the subject from the exclusivity of science labs and democratizing access to the technology. The group was soon paralleled by other holography schools in New York, Chicago and, by the late 1970s, around Europe, too, fostering a generation of artists.

These scientists, engineers, artists and artisans began to identify themselves as holographers, and they proselytized for the new field. Despite the decline of corporate experiments in holography during the 1960s, public exposure to holograms grew exponentially during the late 1970s, when some 500 exhibitions brought the medium to the attention of critics and the public. The large shows created a consumer demand for holographic art. The artisans founded a cottage industry to manufacture, sell and display popular-art holograms. But by the late 1980s, the artform’s popularity was beginning to wane. Ironically, the falling appeal was associated with holograms’ widespread availability and the consequent trade-offs in quality. The bright and colorful Benton and image-plane holograms offered new aesthetic possibilities but they held less awe for audiences who no longer associated them with lasers and progress. And when embossed holograms began to appear in shimmering magazine advertisements, toothpaste packaging and credit-card authentication foils, the artistic market appeared to be doomed.

Such mixed fortunes are not uncommon in science and technology, but they are often quickly forgotten. In some respects, holography’s declining artistic and consumer interest parallels that of other 3D media. Stereoscopes were a Victorian wonder commercialized into a consumer fad, which was extended into the early 20th century by color printing and was later repackaged as the View-Master, a 1930s Bakelite and 1960s plastic children’s toy. In the same way, holograms had a commercial trajectory that started as a gallery phenomenon, then became an art form for the home and eventually were relegated to children’s sticker books. The technologies shared a pattern of popular acclaim, declining interest, niche novelty and transformation for the juvenile market. Cultural memories endure longer, but not necessarily with accuracy. Most mentions of holographic imaging today actually refer to much simpler processes that simulate only a few properties of holograms. (Moving 3D images of people are often mislabeled as holograms, one famous example being a runway-show projection of model Kate Moss by fashion designer Alexander McQueen. Such images are actually created using an old conjuror’s trick involving an offset mirror’s reflection of a video.)

Photograph courtesy of Zebra Imaging.

Such downturns in public favor can afflict scientists, too. Holographers have seen the appeal of their subject rise and fall among their peers. During the late 1960s, holography seemed a rich subject with unlimited possibilities. But the overlapping of research efforts during the field’s most active phases led to repetitive studies, a glut of publications and confused intellectual ownership (this muddled the patent situation as well, hampering commercialization). And as prognostications and contract promises often failed to pan out, the subject periodically developed a bad reputation among technical professionals. As a result, terminology occasionally shifted to insulate new research from old associations, making the evolution of the field harder to discern for analysts and participants alike.

Yet holographic research and development continues more quietly today, some of it along lines that originated half a century ago. Over the past decade, the merging of computers and holograms has been increasingly successful. Holograms can now be synthesized with increasing speed by computer calculation and either recorded on film (as produced by the Texas company Zebra Imaging) or created in real time by electro-optical devices that typically combine computers with components such as liquid crystals and transducers (as demonstrated by the MIT Media Lab). Calculations remain prodigiously difficult despite rising computer power, and the real-time transmission of high-quality digital holograms, the old goal of holographic television, continues to face unresolved technological bottlenecks.

Another long-term goal—dense storage of data via holograms in materials such as photopolymers and crystals—has also seen progress. Such systems can potentially far exceed the storage capacities of current opto-electronic technologies such as DVD-ROMs, but they cannot yet be rewritten as efficiently as magnetic disks.

Although holograms are now less visible to the public, they are widely used as components that affect daily life. Security holograms continue to evolve rapidly to keep one step ahead of counterfeiters. Holographic elements can combine optical properties such as reflection, focusing and magnifying, and they have become an important feature of increasingly sophisticated aircraft visual displays, automotive lighting and laser barcode scanners. Holograms can also serve as key components in detection instruments by interacting with chemicals that alter their optical properties.

Even more generically, Emmett Leith suggested that holography continues to spawn new fields, which he grouped as transholography, that may lose sight of their origins. Examples include phase conjugation (for the correction of optical wavefronts passing through distorting media, such as a telescope’s images of outer space through the Earth’s atmosphere) and speckle interferometry (applied to metrology and high-resolution astronomical imaging, where it corrects for atmospheric disturbance of images by taking a series of millisecond snapshots and computationally combining the results). And the holographic principles underlying inventions from Lippmann photography to embossed holograms are now applied successfully to other waveforms, including the electron holography conceived by Gabor some 65 years ago. Holographic innovation goes on behind the scenes, rather than in the limelight, and continues to yield new applications.
