This Article From Issue

January-February 2005

Volume 93, Number 1

DOI: 10.1511/2005.51.0

Cogwheels of the Mind: The Story of Venn Diagrams. A. W. F. Edwards. xvi + 110 pp. The Johns Hopkins University Press. $25.

Every high school graduate has been exposed to Venn diagrams. Few, however, know anything about their originator, John Venn, or about the interesting and beautiful mathematics that arises from the consideration of Venn diagrams with more than a few sets. There is no better place to start than with Cogwheels of the Mind, by A. W. F. Edwards.

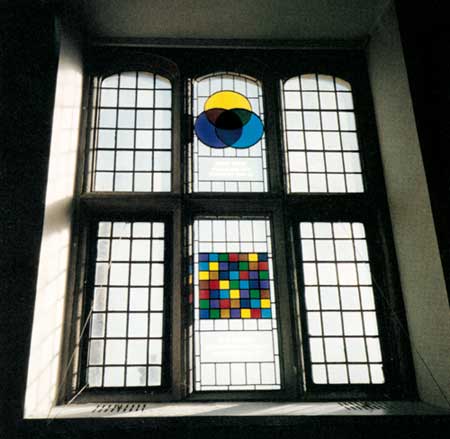

The author, who was the last undergraduate student of the famous statistician R. A. Fisher at the University of Cambridge, proposed in 1988 that the centenary of Fisher's birth be commemorated with a pair of stained-glass windows—one for Fisher and one for Venn—in the Hall of Gonville and Caius College, where both men had been Fellows (a title Edwards also holds). This project stimulated Edwards's interest in Venn diagrams. He then went on to discover an important class of Venn diagrams as well as several rotationally symmetric Venn diagrams.

From Cogwheels of the Mind.

But what exactly is a Venn diagram? Pick up a pencil and draw a curve that meets itself only where the pencil tip first touched the paper; this is curve number 1. This curve divides the paper into two parts, the region inside the curve and the region outside it. Repeat the process for curves 2, 3 and so on up to n, and label each region according to the identifying numbers of the curves that enclose it. Now imagine taking a sharp knife and cutting along each of the curves. If 2^n pieces of paper result and each one has a unique label, then what you had before the cutting started was a Venn diagram. Most readers will be familiar with the classic Venn diagram formed of three interlocking circles (as shown in the photograph below of the stained-glass window dedicated to Venn), which create eight distinct regions by the process just described.
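For readers who like to experiment, the labeling test just described can be tried numerically. The following is only a rough sketch under stated assumptions, not anything taken from the book: the circle positions in `CIRCLES` and the helper names `label` and `sampled_labels` are hypothetical, and sampling a grid checks only that all 2^n labels occur, not that each label belongs to a single connected piece, which the definition also requires.

```python
from collections import Counter
from itertools import product

# Hypothetical layout (an assumption for illustration): three unit circles
# centred on the corners of a small triangle, so that they interlock.
CIRCLES = [((0.0, 0.0), 1.0), ((1.0, 0.0), 1.0), ((0.5, 0.8), 1.0)]


def label(x, y, circles):
    """Return the set of curve numbers (1-based) whose interior contains (x, y)."""
    return frozenset(
        i + 1
        for i, ((cx, cy), r) in enumerate(circles)
        if (x - cx) ** 2 + (y - cy) ** 2 < r ** 2
    )


def sampled_labels(circles, lo=-2.0, hi=3.0, steps=200):
    """Collect every distinct label seen on a regular grid of sample points."""
    step = (hi - lo) / steps
    coords = [lo + i * step for i in range(steps + 1)]
    return {label(x, y, circles) for x, y in product(coords, repeat=2)}


n = len(CIRCLES)
labels = sampled_labels(CIRCLES)

# A Venn diagram of n curves must show all 2^n labels.
print(len(labels), "labels found;", 2 ** n, "required")

# Group the labels by how many curves enclose the region.
by_size = Counter(len(s) for s in labels)
print([by_size[k] for k in range(n + 1)])
```

Grouping the labels by the number of enclosing curves gives the counts 1, 3, 3, 1 for three circles, which are binomial coefficients. Two circles that do not overlap would yield only three labels rather than four, so they fail the test.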

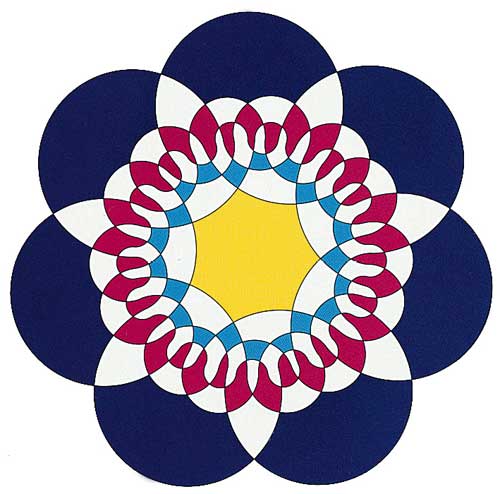

Edwards begins by presenting a most interesting history of Venn diagrams and then discusses some of his own discoveries, such as the fact that a very nice sequence of Venn diagrams results from successively applying the rule of subdividing the regions adjacent to the first drawn curve. Venn's own general rule was that one subdivides the regions adjacent to the most recently added curve.

From Cogwheels of the Mind.

Edwards presents an extremely personal view of Venn diagrams. He devotes the majority of the book to explaining how he became intrigued by the diagrams and discovered some of their fascinating properties. The volume is lavishly illustrated in color and even reproduces Edwards's "scratch work" pertaining to his research. However, the book contains only one diagram that is attributed to a living researcher other than Edwards: Branko Grünbaum's symmetric diagram of five ellipses. So do not expect a balanced overview of recent research on Venn diagrams.

I have a few minor quibbles with some of Edwards's choices. For example, he talks in the second chapter of drawing "an endless line on a piece of paper so that it cuts itself any number of times." It would be better to call it a curve and mention that it is closed. Figure 3.2 shows a "corrected redrawing" of C. S. Peirce's attempt to draw a seven-set Venn diagram. It would have been more helpful to the reader to show Venn's construction for seven curves and thus illustrate what Venn referred to as its "comb-like" structure. There are several places where Edwards seems to be implying incorrectly that Venn did not have a general method for constructing Venn diagrams.

In the third chapter, Edwards states that "every n-set Venn diagram constructed by the sequential addition of sets has the same structure when considered as a mathematical graph." I am not sure what he intended by this statement, but it seems incorrect, because it is certainly the case that for both Venn and Edwards the general method of constructing diagrams is "by the sequential addition of sets," and yet their diagrams are distinct as mathematical graphs whenever the number of curves is greater than four.

This book is aimed at readers interested in recreational mathematics, who will particularly enjoy Edwards's discussion of the connections with such classic topics as binomial coefficients and Gray codes and his references to personalities such as Lewis Carroll. In spite of my few reservations about the book, I heartily recommend it for readers interested in knowing more about John Venn and the geometric properties of Venn diagrams. It will also be appreciated by those interested in the process of mathematical discovery.

Frank Ruskey, Computer Science, University of Victoria, British Columbia, Canada
