This Article From Issue

September-October 2021

Volume 109, Number 5

Page 315

DO NOT ERASE: Mathematicians and Their Chalkboards. Jessica Wynne. With an afterword by Alec Wilkinson. 227 pp. Princeton University Press, 2021. $35.

Chalk is the fossil fuel of modern mathematics. It was formed in the Cretaceous period, roughly 100 million years ago, when the seas swarmed with foraminifera and other planktonic organisms whose calcium-rich skeletons accumulated in thick beds of the soft, white stone. Now the chalk is quarried, refined, and pressed into crayon-size sticks that mathematicians delight in stroking across smooth slate. A chalkboard is the preferred medium of expression for many kinds of mathematical discourse: solitary ruminations, teaching, presenting work to colleagues, collaborative research sessions.

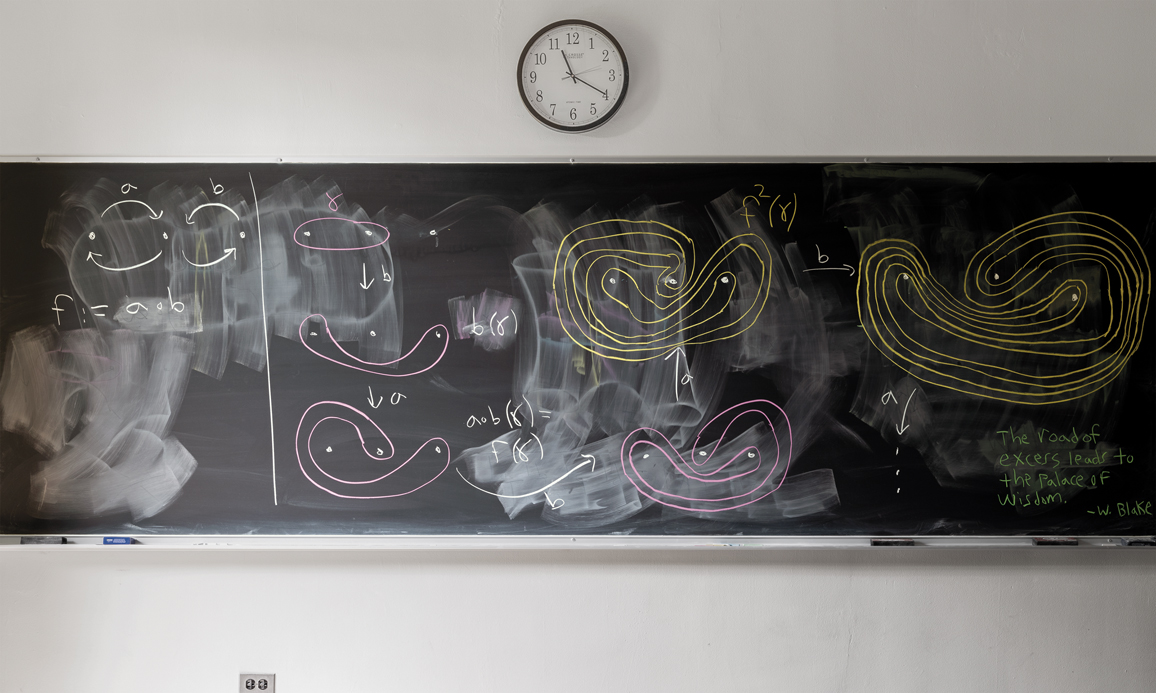

Do Not Erase presents more than 100 specimens of the mathematical chalkboard, in color photographs made by Jessica Wynne, who is on the faculty of the Fashion Institute of Technology in New York. Each photograph occupies a full page. The facing page holds a capsule biography of the mathematician whose work is on exhibit, and a few paragraphs of commentary or explanation.

Photograph by Jessica Wynne. From Do Not Erase.

Some of the chalkboards were clearly produced specifically for the occasion of the photographer’s visit, but most of them are candid records of recent or ongoing work. The photographs generally show the entire board and little else—perhaps a chalk tray, an eraser, or the “Do Not Erase” placard that gives the book its title. For each photograph, the camera has been placed squarely in front of the chalkboard, accentuating its rectilinear geometry. As Wynne herself puts it, “I photograph in a literal, objective, straightforward way—showing the chalkboards as real objects—capturing their texture, erasure marks, layers of work, and all forms of light reflecting off their surfaces.”

Conspicuously absent from the images are the mathematicians who made all those white-on-black squiggles. No faces or hands stray into the frame. This austere aesthetic can be frustrating at times; we would like to see the artist behind the work, or better yet the artist at work. Nevertheless, I think Wynne chose the right strategy. She forces us to look at the chalkboards themselves, to see them as documents or artifacts, without the irresistible distraction of human presence.

Among the unseen mathematicians are many celebrities, including five recipients of the Fields Medal, which is typically described as the mathematical equivalent of a Nobel Prize. But I am delighted to report that there are also lots of grad students and postdocs and junior faculty, whose blackboard scribblings are every bit as interesting as those of the illuminati.

Wynne came to this subject not as an adept or an aficionado of mathematics, but through an accident of geography: Her summertime neighbors on Cape Cod are Amie Wilkinson and Benson Farb, mathematicians at the University of Chicago. One day she found it intriguing to watch Farb work for hours in his notebook on a complex problem. Later she visited Jaipur, India, where she photographed elementary school blackboards filled with lessons in the Hindi language. Looking at the photos on her return, she was reminded of the mathematical symbols in Farb’s notebook. What the Hindi and the mathematics had in common was their inscrutability to someone from outside the culture. She was excited by these patterns that both drew her in and pushed her away, and thus was launched a project. She set up her tripod in departments of mathematics at two dozen American universities as well as a few institutions farther afield—in Paris and its suburbs, and in Brazil.

“Inscrutability” is a word that may well cross the reader’s mind when looking at some of these images, where dense thickets of Greek and Roman letters sprout superscripts and subscripts. Often, however, there’s at least a hint of sense and substance, something for the viewer to grab hold of—a revealing diagram, or perhaps a few lines of explanatory text amid the bristling equations.

Blackboards were once standard equipment in all kinds of classrooms and academic environments. Chemists drew their molecules with chalk, and grammarians diagrammed their sentences. But most fields have moved on, willingly or not, to whiteboards or to PowerPoint. The mathematics community is the last holdout, clinging stubbornly to its dusty, distinctly old-fashioned chalkboards.

The mathematicians quoted in this volume are proud of that recalcitrance. They praise chalk in terms of “tactile experience” and “sensual pleasure.” The chalkboard is a fluid and informal medium of expression, they say. If you change your mind about something, you can smudge out a symbol with the heel of your hand. Impermanence becomes a virtue. Nathan Dowlin of Columbia University writes that “on a chalkboard the idea can evolve gradually, the way it does in your mind. There is no pressure to get it perfect the first time, or even to get it right, since it’s going to be erased in an hour or two anyway.”

On the subject of erasure there’s this further comment from Virginia Urban of the Fashion Institute of Technology: “A blackboard has a special quality—while incorrect or discarded ideas are easily erased, the haze is still visible as a reminder of the work that went into arriving at the solution.”

Chalk is even praised for slowing the pace of mathematical work. When giving a “chalk talk,” a mathematician can go no faster than he or she can write equations on the board. Paul Apisa of Yale University explains: “A virtue of chalk, and talks that use it, is that it checks the Icarian desire of a speaker to communicate too much, heedless of the capacity of the listeners to comprehend.”

A few of the comments even suggest that without chalk, mathematics itself might be in jeopardy. “The chalkboard is the glue that holds together this community and its rituals,” writes Nicholas G. Vlamis of Queens College of the City University of New York. Benson Farb declares, “Chalkboards are a major part of my life. I couldn’t live without them.” In these statements I hear a note of anticipatory nostalgia, born of the fear that we are now living through the last great days of chalkboard culture. And it may be true. Natural slate boards are hard to come by, and the mathematicians’ favorite brand of chalk, called Hagoromo Fulltouch, was unavailable for a while a few years ago. The writing is on the wall, so to speak.

Peter Woit of Columbia University takes an optimistic stand: “I’m willing to bet that a hundred years from now, mathematicians will still be using chalk and chalkboards.” I don’t share his confidence, but I do have faith that a hundred years from now mathematicians will have an effective way to communicate and collaborate, whether or not it involves fossilized foraminifera. Whatever the medium might be, I hope it can also provide those sensual and tactile satisfactions enjoyed by ardent chalkophiles. Perhaps it will even lend itself to a sumptuous book of fine photographs, assuming that medium survives.
