Nature Is Dead. Long Live Nature!

By Robert J. Cabin

Our view of what constitutes the natural world has evolved and, as restorative conservation efforts on the island of Hawai’i show, must continue to do so.


DOI: 10.1511/2013.100.30

An idea, a relationship, can go extinct, just like an animal or a plant. The idea in this case is “nature,” the separate and wild province, the world apart from man to which he adapted, under whose rules he was born and died. —Bill McKibben

In his best-selling and influential 1989 book The End of Nature, Bill McKibben surveyed nature and found it dead. “By the end of nature,” he wrote, “I do not mean the end of the world … I mean a certain set of human ideas about the world and our place in it.” For McKibben, the nail in nature’s coffin was human-induced climate change: “By changing the weather, we make every spot on the earth man-made and artificial.” His pronouncements were boldly stated, but they reflected the conclusions of many ecological thinkers at the time.

The years since the publication of The End of Nature have proved McKibben’s prescient warnings about the escalating consequences of human-induced climate change depressingly accurate. But was his vision of the death of nature similarly prophetic—or greatly exaggerated?

Photograph courtesy of Dan Culver/Wikimedia Commons.

Hawaii’s many paradoxes provide a globally important microcosm in which to examine this question. Although it represents a mere 0.2 percent of the land area of the United States, this archipelago contains all of Earth’s climates, most of its ecosystems and some of its most diverse human communities. Despite Hawaii’s relatively low overall biological diversity, three-quarters of all of the bird and plant extinctions in the United States have occurred there, and the islands now contain more endangered species per square mile than anywhere else in the world. Of the 59 endangered species listed by the Obama administration in the past two years, 48 have been Hawaiian plants and birds.

I first landed in Hawaii in 1996 on a postdoctoral fellowship at the National Tropical Botanical Garden on the island of Kauai. Throughout this fellowship, and later as a research ecologist for the U.S. Forest Service, I was immersed in the scientific and conservation communities’ efforts to better understand and conserve the islands’ remaining native species and ecosystems. My ideas about nature and conservation were irrevocably changed as I transitioned to doing more applied research. That work led me to interact and collaborate with the numerous stakeholders involved in our on-the-ground efforts to preserve and restore Hawaii’s remaining “natural” areas. Environmentalists can be understandably impatient with people they perceive as slow to act on conservation issues: How can they while away their time pondering esoteric topics such as the nature of nature while our planet is going down the tubes? After spending more time in what often felt like the triage room of Hawaiian conservation, I sometimes felt like shaking such people and yelling, “Wake up and do some real work before it’s too late!”

Over time, however, I became increasingly less sure about exactly what real work we should be doing. Which species and ecosystems should we focus on, and what should we do to them? Why do so many seemingly like-minded people within the conservation and scientific communities often disagree with each other so passionately? The evolution of my thinking is well expressed by the environmental historian William Cronon, who edited the 1996 book Uncommon Ground: Rethinking the Human Place in Nature. In the book’s introduction, he astutely observed:

At a time when threats to the environment have never been greater, it may be tempting to believe that people need to be mounting the barricades rather than asking abstract questions about the human place in nature. Yet without confronting such questions, it will be hard to know which barricades to mount, and harder still to persuade large numbers of people to mount them with us. To protect the nature that is all around us, we must think long and hard about the nature we carry inside our heads.

Our mental images of the “real nature” of a particular place are created in part by our knowledge of and perspective on that area’s history. The Hawaiian archipelago’s vast prehuman history ended and its prehistoric period began when Polynesian sailors first reached the islands around 1,000 years ago. Hawaii’s modern era abruptly began when Captain James Cook accidentally “discovered” the islands in 1778. Like the Polynesians before him, Cook and his successors deliberately and unintentionally brought many new species to these remote islands—species that never could have colonized them on their own. The effects of these human interactions with the Hawaiian landscape continue to create puzzles for conservationists and environmental philosophers alike.

Today, some see Hawaii’s prehuman era as the epitome of McKibben’s idea of a pure, “uncontaminated” nature. Those with a Romantic bent may picture this time as a golden age in which a rainbow of delicate species and pristine ecosystems coexisted harmoniously in a perpetual Eden. Modern ecologists, by contrast, view the prehuman world of Hawaii and everywhere else as being neither harmonious nor unchanging. For instance, we know that “natural disasters” such as volcanic eruptions and tsunamis caused enormous physical and biological changes throughout this period. The arrival of some new species also drastically altered the islands’ ecology and caused the extinction of species that had arrived before that time.

Jack Jeffrey

If we consider the early Polynesians as being separate from nature, it follows that they were the first nonnative species to reach Hawaii. Consequently, if our goal is to restore these islands to their natural condition, we should strive to erase as much of these humans’ footprint as possible. But if we view these people as being as natural as the species that colonized the islands before them, trying to erase their presence would be as misguided as trying to remove a native bird and to negate the countless ways this species directly and indirectly affected the islands. Similarly, if we see Cook and his successors as part of nature, we could consider the swarms of ecologically devastating species they brought (not to mention Honolulu’s eventual skyscrapers and traffic jams) as being as natural as the patches of prehuman forest that still haunt portions of these islands.

If we view modern people as unnatural, should we view the prehistoric Hawaiians as separate from nature as well? Or would it be wiser to employ a more nuanced approach? We could decide, based on their present ecological effects, that some of the species brought to the islands by prehistoric and modern peoples are natural, and others are unnatural and should be removed. However, if we rigidly employed this model, we would at least occasionally find ourselves in the awkward position of eradicating some beloved and long-established Polynesian species while preserving others that recently arrived from ecologically and culturally distant places such as Europe and South America.

Like many Western scientists and environmentalists who come to Hawaii from elsewhere, at first I was largely in the “humans are separate from nature” camp. Many of my colleagues and I were especially entranced by the islands’ prehuman species radiations and coevolutionary interactions, which arguably remain the world’s most spectacular examples of these phenomena. If Charles Darwin had landed in Hawaii rather than the Galápagos, he almost certainly would have devised his theories of evolution and natural selection a lot more quickly! Thus I was more than happy to leave conservation biology’s tangled web of ethical and socioeconomic problems to the philosophers and social scientists. But as I began to see that the relationship between humans and nature was more complex than I had initially perceived, I began to find all those philosophical and political questions at least as interesting and important as the more technical issues associated with my “pure” ecological research.

For example, tropical dry forests are among the most endangered ecosystems in the world in general and within the Hawaiian Islands in particular. The lowland, dry, leeward sides of all the main Hawaiian Islands were once covered by magnificent forests teeming with strange and beautiful species, such as brightly colored, fungi-eating snails and giant flightless geese. Paradoxically, the diversity of these primeval forests was probably created and maintained by rivers of molten lava that destroyed everything in their path as they wound their way down the slopes of the volcanoes and into the sea. Before today’s now-dominant herbaceous nonnative species invaded these islands, the native plant communities apparently did not produce enough understory biomass to carry fires much beyond the lava rivers, so the forests on either side of the flows remained more or less intact. Thus, as each wave of new lava cooled and weathered, it was slowly colonized by the species in the adjacent forests. The result of thousands of years of this dynamic cycle was a mosaic of forests of different ages, with different species assemblages growing sometimes side by side.

Tragically, more than 90 percent of Hawaii’s original dry forests have been destroyed, and many of their most ecologically important species and processes are actually or functionally extinct. For example, most of the native birds and insects that once performed such critical services as flower pollination and seed scarification and dispersal are now gone.

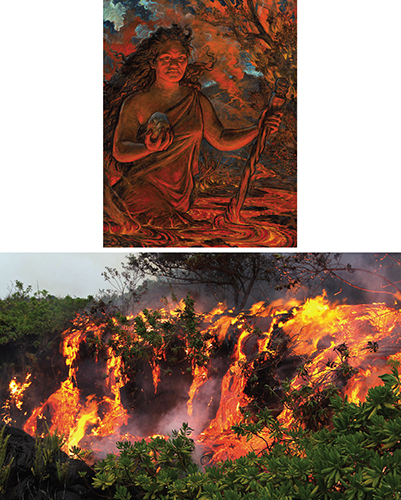

Photograph at top courtesy of Virginia Thaxton. Photograph at bottom: Jack Jeffrey.

Various efforts to conserve the remaining fragments of this once vast and culturally important ecosystem on the island of Hawai’i eventually coalesced into a working group comprising local residents; native Hawaiians, including Hannah Springer, who initiated the group’s formation; scientists such as myself; and representatives from more than 25 different agencies. As we set out to physically restore a highly degraded remnant dry forest, we encountered a series of philosophical conundrums that in many respects were even more problematic than the blanket of fire-promoting African fountain grass (Pennisetum setaceum) that dominates this region of the island. For instance, although kukui (Aleurites moluccana) is the state tree of Hawaii, it is a nonnative species that was deliberately brought over by the early Polynesians. They had uses for many of the tree’s parts, including its oil-rich nuts, which they burned to provide light. Some of us wanted to explore the efficacy of planting kukui to suppress fountain grass and create favorable nurse environments for native species. Others were vehemently against planting kukui or any nonnative species; some were willing to try kukui and other Polynesian species; and a few were game to experiment with any promising species (including nonnative animals) regardless of their geographic origin.

We had similar philosophical disagreements over what to do about the existing introduced species in this area. For example, in the 1950s, territorial foresters planted several different nonnative tree species within the remnant forest we were trying to restore. One of these specimens, a black cypress (Callitris endlicheri), eventually grew taller than all the other trees and became an important regional landmark. Some members of the working group believed that every nonnative species in the forest, including that cypress, should be eradicated as quickly as possible. Others felt that because this tree was culturally important and ecologically harmless (it had failed to regenerate since it was planted), killing it would be unnecessary, insensitive and counter to our larger mission. Such disagreements often led to yet more passionate debates over exactly what our larger mission was or should be.

Photograph courtesy of Forest and Kim Starr.

As if the situation weren’t complex enough, while we were arguing over these issues, someone discovered that Hawaii’s largest native insect, the beautiful Blackburn’s sphinx moth (Manduca blackburni), appeared to be using tree tobacco (Nicotiana glauca), an invasive South American tree in the nightshade family. As with many other native insects, the rarity of this endangered moth is due in part to the rarity of its host plant, which in this case is yet another endangered native dry-forest species that is also in the nightshade family. Should we leave the incipient stand of tree tobacco in our remnant forest alone, or should we destroy it before it became uncontrollable?

Even if we had wanted to leave the resolution of such conflicts to other members of the working group, my scientific colleagues and I would still have had to wrestle with a never-ending stream of ethical dilemmas. In one experiment, an endemic vine appeared out of nowhere, climbed the poles supporting one of our shade-cloth structures, and significantly reduced the light in several of the underlying plots. Should we cut it down, or leave it alone because it was native? What about the tomato vines (which had apparently germinated from the remains of a volunteer’s lunch) that were taking over some of our unmanipulated control plots? Should we destructively harvest all of our research plants (several of which were critically endangered) or spare some for restoration purposes?

Things only became more heated when we needed the approval of the working group to do our science. For instance, anecdotal evidence suggested that bulldozing might be an effective technique for removing fountain grass and establishing native species. However, when we proposed incorporating a dozing treatment into our next round of experiments, members’ responses ranged from “Great idea!” to “Over my dead body!”

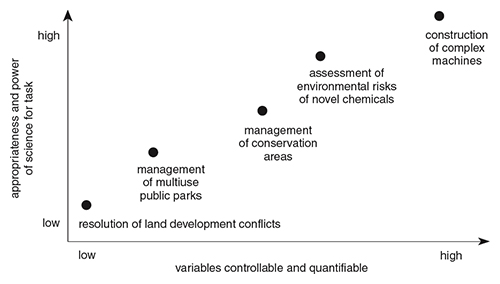

Many people believe that such conflicts should be resolved by the best available science. Yet I found repeatedly that even when opposing stakeholders agree to base their decisions on the results of a particular experiment or research program, they rarely agree on how to implement, interpret and apply this research in the messy real world. In this instance, although we were ultimately allowed to perform some small-scale dozing experiments, none of the members of the working group (or the outside experts they consulted) who had initially been against using dozers were swayed by what turned out, from our perspective, to be some very promising results. In a 2006 Macroscope column in American Scientist, Daniel Sarewitz eloquently describes this phenomenon: “Even when a disagreement seems to be amenable to technical analysis, the nature of science itself usually acts to inflame rather than quench the debate.” He continues, “‘More research’ is often prescribed as the antidote, but new results quite often reveal previously unknown complexities, increasing the sense of uncertainty and highlighting the differences between competing perspectives.”

Rather than being the product of a logical, step-by-step decision-making process, the resolutions to our working group conflicts largely came from a combination of rough democracy and idiosyncratic variables, such as the weather, the ebb and flow of funding opportunities, and who was doing the actual work in the field that week. Such was the case within Hawaii’s conservation and natural resource management communities in general. Similarly, every time I thought I had finally settled on an intellectually robust framework for understanding the ecology of dry forests or resolving my applied problems, some new biological or ethical curveball forced me to rethink my science and my actions. Thus sometimes I killed all my experimental native plants and weeded those pesky tomato vines, and sometimes I didn’t.

Several scholars have come to the ironic conclusion that all of our mental images of nature are necessarily unnatural human projections. Their point is not that the natural world doesn’t exist or isn’t worth fighting for, but that our understanding of and relationship to nature is more a product of our cultural and personal values than of any external physical reality. For example, we may like to think that the landscapes, plants and animals we encounter within our national parks are wild or natural. However, the “preservation” of such areas was an entirely human endeavor that was motivated by a subset of a particular culture that was defining a new vision of nature. Moreover, even in the increasingly rare instances—if there are any—in which we haven’t already altered the living and nonliving components of these parks, our perception of them is always intimately intertwined with the human world.

Painting at top by Arthur Johnsen. Photograph by Leigh Hilbert Photography.

For instance, the early Polynesians believed that the goddess Pele lived in what is now Hawai’i Volcanoes National Park, and that Kilauea’s eruptions were an expression of Pele’s longing for her lost love, Lohiau, a human chief. What they saw and felt when they visited this sacred area was obviously quite different from the experiences of, say, a modern volcanologist from the United States or an ecotourist from Japan. As the anthropologist Richard Nelson concluded in his 1983 book Make Prayers to the Raven: A Koyukon View of the Northern Forest, “Reality in nature is not just what we see, but what we have learned to see.”

Jack Jeffrey

Humanity’s perception of and reaction to our own species’ transformation of nature has also varied considerably across different cultures, places and times. For example, throughout New England’s colonial period, most Europeans were proud of the ways their culture “tamed” what they perceived as an unruly and evil landscape. As early as 1653, historian Edward Johnson approvingly viewed the transformation of a “remote, rocky, barren, bushy, wild-woody wilderness” into a “second England for fertilness” as a product of divine guidance and inspiration. As more time passed, however, some of the descendants of those early Europeans began to see the ecological changes they had wrought more as a fall from the Garden than the planting of one. In 1855, Henry David Thoreau famously lamented:

When I consider that the nobler animals have been exterminated here … I cannot but feel as if I lived in a tamed, and, as it were, emasculated country…. I take infinite pains to know all the phenomena of the spring, for instance, thinking that I have here the entire poem, and then, to my chagrin, I hear that it is but an imperfect copy that I possess and have read, that my ancestors have torn out many of the first leaves and grandest passages, and mutilated it in many places.

Yet the ecological situation in Thoreau’s New England was far more complex than he realized. We now know that many of the missing components of his “entire poem” had actually been created and maintained by the extensive earlier activities of Native Americans. We can only guess at how these indigenous peoples may have altered the “pristine wilderness” they first encountered.

Photograph at top courtesy of the author. Photograph at bottom: Jack Jeffrey.

It can be tempting to believe that, although the insights about nature gleaned from so-called nonscientific cultures and perspectives may be interesting, modern Western science is the best or only way to discover the truth. Thus our science has proven that volcanic eruptions are generated by underlying geologic processes, not the tears of mythical goddesses. However, if the future is anything like the past, our scientific understanding of volcanoes and everything else will eventually change so drastically that one day people may consider our present understanding to be quaint and even mythological. Indeed, as the ecological historian Carolyn Merchant observed in her 1989 book Ecological Revolutions: Nature, Gender, and Science in New England, science itself may be viewed as “an ongoing negotiation with nonhuman nature for what counts as reality. Scientists socially construct nature, representing it differently in different historical epochs.”

Many scholars have also shown that the history of science has been far more complex than the cartoonish depiction of scientists standing on the shoulders of giants and marching toward truth—a depiction we often subscribe to, at least implicitly. In his classic Structure of Scientific Revolutions, Thomas Kuhn provided numerous examples of our “persistent tendency to make the history of science look linear or cumulative, a tendency that even affects scientists looking back at their own research.” Kuhn argued that although science, much like the process of biological evolution, may be seen as moving from “primitive” states, it should not be seen as moving toward anything in particular. In addition, he persuasively showed how, once scientists become sufficiently invested in a particular paradigm, they often refuse to adopt a new and potentially better one. They appear to have the unshakable belief that “nature can be shoved into the box the [old] paradigm provides.” Thus, as the great German physicist Max Planck wryly observed, “A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die.”

Jack Jeffrey

We are also quite capable of mistaking the scientific paradigms we project onto nature for reality itself. For example, we often forget that our cherished taxonomic divisions—the kingdoms, families, and even species with which we compartmentalize the diversity of life—are in fact ever-changing mental constructs that do not actually exist in nature. I realized this one day while collecting botanical data with some colleagues. Because we had each separately kept abreast of recent systematic revisions to that particular flora, we knew several of the same plants by different Latin names and groupings. After a few minutes of increasingly exasperating confusion, one of our technicians finally said, “Why don’t we just use the common Hawaiian names—they’re more accurate and they never change!”

Illustration by Tom Dunne.

Our natural resource management paradigms also change with the political winds and with our ever-evolving visions of nature. For example, the dominant scientific view up to the mid-20th century, fueled partly by the historically close relationship between conservation and the “science” of eugenics, was that Hawaii’s native species and ecosystems were inferior. They should thus be “invigorated,” scientists believed, by stronger and fitter species from “more advanced” cultures, such as Europe and the United States. The emphasis subsequently shifted toward the islands’ native (prehuman) biodiversity—and the pendulum now appears to be swinging toward greater acceptance and even appreciation both of Hawaii’s culturally important (prehistoric) plants and animals and of its modern so-called novel ecosystems—mixtures of native and nonnative species that never existed in the past. The interactions between people and the environment in today’s ecologically homogenized world have become so complex that determining which, if any, aspects of nature are “wild” has become increasingly difficult.

Attempts to determine whether one human activity is necessarily more natural than another often result in arbitrary distinctions as well. For instance, contemporary back-to-the-land farmers are often motivated in part by a desire to be closer to nature and live a more natural life. Like conservation, agriculture is yet another human invention that blurs the boundaries between what is and is not natural. To practice their trade, even the purest organic farmers in the United States must either create unnatural clearings or use the ones established by their predecessors. If they are lucky, abundant populations of nonnative earthworms will improve their soil, and nonnative honeybees will pollinate their intensively bred exotic crops. Draft horses, used by those farmers who are most intent on following earlier practices, were originally introduced to the New World by Christopher Columbus, although there had been horses in North America until 12,000 years ago when, archaeologists speculate, newly arriving humans killed them off. Even if they use horses instead of tractors, at least some farmers still end up purchasing “natural” soil amendments manufactured in other countries using highly industrialized, fossil fuel–driven processes.

Assessing what is natural is further complicated by the fact that we are increasingly discovering that our ancestors transformed nature far more than scientists formerly assumed. For instance, we now believe that some of the world’s most biologically diverse and “pristine” tropical rain forests are literally growing out of the ruins of once extensive and sophisticated pre-European civilizations. Recent research has even suggested that Columbus’s New World voyage set in motion a chain of events that ultimately resulted in large-scale, human-induced climate change several centuries before the postindustrial global warming that McKibben viewed as the end of nature.

Photograph courtesy of Robert J. Cabin.

If we see humans and nature as mutually exclusive entities, then nature is now surely dying or already dead. But as people in Hawaii and across the planet are demonstrating, viewing humans and nature as inseparable can help motivate us to both preserve our remaining biodiversity and create more harmonious relationships between people and nature—however one perceives and defines it. Ironically, McKibben’s contemporary organization, 350.org, which is dedicated to building a global grassroots movement to solve today’s dire climate crisis, provides a fine example of how scientists, environmentalists, indigenous peoples, religious institutions and citizens from around the world can work together in a democratic, pluralistic and inclusive manner that honors both science and other ways of conceptualizing, understanding and interacting with nature.
