The Toxicity of Recreational Drugs

By Robert S. Gable

Alcohol is more lethal than many other commonly abused substances

DOI: 10.1511/2006.59.206

The Shuar tribes in Ecuador have for centuries used native plants to induce religious intoxication and to discipline recalcitrant children. By comparison, most North Americans know little about the mood-altering potential of the wild vegetation around them. And those who think they know something on this subject are often dangerously ignorant. Over a three-week period in 1983, for example, 22 Marines wanting to get high were hospitalized because they ate too many seeds of the jimsonweed plant (Datura stramonium), which they found growing wild near their base, Camp Pendleton in southern California.

A dozen seeds of jimsonweed contain about 1 milligram of atropine, 10 milligrams of which can cause nausea, severe agitation, dilation of pupils, hallucinations, headache and delirium. Tribal groups in South America refer to datura plants as the "evil eagles." Of approximately 150 hallucinogenic plants that are routinely consumed around the world, those with atropine have the most pernicious reputation, something these Marines discovered the hard way.

The easier way to learn about the relation between the quantity of a substance taken and the resulting level of physiological impairment is through careful laboratory study. The first example of such an exercise, in 1927, used rodents. Research toxicologist John Trevan published an influential paper that reported the use of more than 900 mice to assess the lethality of, among other things, cocaine. As he and others have since found, a substance that is tolerated or even beneficial in small quantities often has harmful effects at higher levels. The amount of a substance that produces a beneficial effect in 50 percent of a group of animals is called the median effective dose. The quantity that produces mortality in 50 percent of a group of animals is termed the median lethal dose.

Laboratory tests with animals can give a general picture of the potency of a substance, but generalizing experimental results from, say, mice to humans is always suspect. Thus toxicologists also use two other sources of information. The first is survey data collected from poison-control centers, hospital emergency departments and coroners' offices. The second consists of published clinical and forensic reports of fatalities or near-fatalities.

But these sources, like animal studies, have their limitations. Simply tallying the number of people who die or who show up at emergency rooms is, by itself, meaningless because the number of such incidents will be influenced by the total number of people using a particular substance, something that is impossible to know. For example, atropine is more toxic than alcohol, but more deaths will be reported for alcohol than for atropine because so many more people get drunk than ingest jimsonweed. Furthermore, most overdose fatalities involve the use of two or more substances (usually including alcohol), situations for which the overall toxicity is largely unknown. In short: When psychoactive substances are combined, all bets are off.

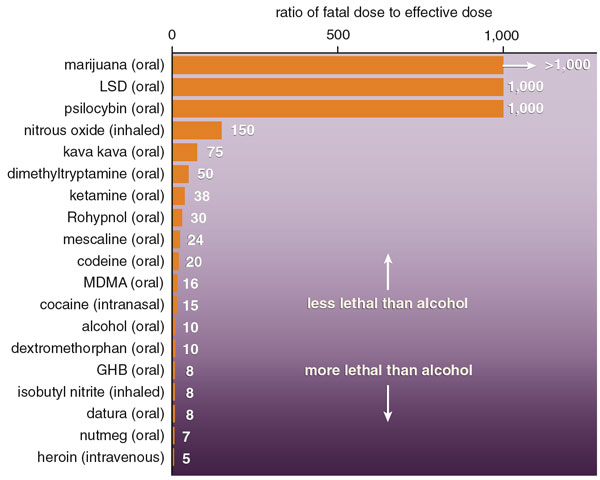

How then does one gauge the relative risks of different recreational drugs? One way is to consider the ratio of effective dose to lethal dose. For example, a normally healthy 70-kilogram (154-pound) adult can achieve a relaxed affability from approximately 33 grams of ethyl alcohol. This effective dose can come from two 12-ounce beers, two 5-ounce glasses of wine or two 1.5-ounce shots of 80-proof vodka. The median lethal dose for such an adult is approximately 330 grams, the quantity contained in about 20 shots of vodka. A person who consumes that much (10 times the median effective dose), taken within a few minutes on an empty stomach, risks a lethal reaction. And plenty of people have died this way.
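The arithmetic in this comparison can be sketched in a few lines of Python. This is only an illustration: the function names, the conversion constants (milliliters per U.S. fluid ounce, the density of ethanol) and the 40 percent alcohol content assumed for 80-proof vodka are assumptions added here, not figures taken from the article.

```python
# Illustrative sketch only. The constants are assumptions, not article data.
ML_PER_US_FL_OZ = 29.5735          # assumed conversion factor
ETHANOL_DENSITY_G_PER_ML = 0.789   # assumed density of ethyl alcohol


def ethanol_grams(volume_oz, abv, servings=1):
    """Rough grams of ethanol in `servings` drinks of `volume_oz` ounces each."""
    return servings * volume_oz * ML_PER_US_FL_OZ * abv * ETHANOL_DENSITY_G_PER_ML


def dose_ratio(effective_dose, lethal_dose):
    """Median lethal dose divided by median effective dose (hypothetical helper)."""
    if effective_dose <= 0:
        raise ValueError("effective dose must be positive")
    return lethal_dose / effective_dose


# Two 1.5-ounce shots of 80-proof (assumed 40 percent) vodka.
two_shots = ethanol_grams(1.5, 0.40, servings=2)

# The article's figures for a 70-kilogram adult: 33 g effective, 330 g lethal.
alcohol_ratio = dose_ratio(33, 330)

print(f"two shots: about {two_shots:.0f} g of ethanol")
print(f"lethal-to-effective ratio for alcohol: {alcohol_ratio:.0f}")
```

Under these assumed constants the two-shot estimate comes out in the same neighborhood as the article's rounded 33 grams, and the ratio reproduces the factor of 10 quoted in the text.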

As far as toxicity goes, such deaths are quite telling. Indeed, autopsy reports from cases of fatal overdose (whether from alcohol or some other substance) provide key information linking death and drug consumption. But coroners are generally hard-pressed to determine the size of the dose because significant redistribution of a drug often occurs after death, typically from tissues of solid organs (such as the liver) into associated blood vessels. As a result, blood samples may show different concentrations at different times after death. Even if investigators had a valid way to measure the concentration of a lethal drug in a decedent's blood, they would still need to work backward to make a retrospective estimate of the quantity of the drug consumed. Although the approximate time of death is often known, the time the drug was taken and the rate at which it was metabolized are not so easily established. Lots of guesswork is typically involved. Obviously, people who want clean answers should not seek information from corpses.

Despite these difficulties, it is evident that there are striking differences among psychoactive substances with respect to the lethality of a given quantity. The way a substance is absorbed is also a critical factor. The common routes of consumption, from the least toxic to the most toxic (in general), are: eating or drinking a substance, depositing it inside the nostril, breathing or smoking it, and injecting it into a vein with a hypodermic syringe. So, for example, smoking methamphetamine (as is done with the increasingly popular illicit drug "crystal meth") is more dangerous than ingesting it.

Once a drug enters the body, physiological reactions are determined by many factors, such as absorption into various tissues and the rates of elimination and metabolism. Individuals vary enormously in how they metabolize different substances. One person's sedative can be another person's poison. This variability alone introduces unavoidable ambiguities in estimating effective and lethal doses. Still, the wide range between different substances suggests that they can be rank-ordered with reasonable confidence. One can be quite certain, for example, that the risk of death from ingesting psilocybin mushrooms is less than from injecting heroin.

The most toxic recreational drugs, such as GHB (gamma-hydroxybutyrate) and heroin, have a lethal dose less than 10 times their typical effective dose. The largest cluster of substances has a lethal dose that is 10 to 20 times the effective dose: These include cocaine, MDMA (methylenedioxymethamphetamine, often called "ecstasy") and alcohol. A less toxic group of substances, requiring 20 to 80 times the effective dose to cause death, includes Rohypnol (flunitrazepam or "roofies") and mescaline (peyote cactus). The least physiologically toxic substances, those requiring 100 to 1,000 times the effective dose to cause death, include psilocybin mushrooms and marijuana, when ingested. I've found no published cases in the English language that document deaths from smoked marijuana, so the actual lethal dose is a mystery. My surmise is that smoking marijuana is more risky than eating it but still safer than getting drunk.
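The four bands described above can be written as a small lookup, shown here as a hedged Python sketch. The function name, the band labels and the handling of boundary values are assumptions made for illustration; the text gives only the ranges themselves and says nothing about ratios between 80 and 100, so those are reported as unclassified.

```python
def toxicity_band(ratio):
    """Map a lethal-to-effective dose ratio to the bands named in the text.

    Boundary handling is an assumption: a ratio of exactly 10 goes in the
    10-to-20 band, since the text places alcohol (ratio about 10) there.
    """
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    if ratio < 10:
        return "most toxic (under 10x)"
    if ratio <= 20:
        return "largest cluster (10x to 20x)"
    if ratio <= 80:
        return "less toxic (20x to 80x)"
    if 100 <= ratio <= 1000:
        return "least toxic (100x to 1,000x)"
    return "unclassified (outside the ranges given in the text)"


# Alcohol, using the 330 g lethal and 33 g effective doses quoted earlier.
print(toxicity_band(330 / 33))
```

The labels are shorthand for this sketch only; the ranking itself remains approximate, for the reasons of individual variability discussed earlier.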

Alcohol thus ranks at the dangerous end of the toxicity spectrum. So despite the fact that about 75 percent of all adults in the United States enjoy an occasional drink, it must be remembered that alcohol is quite toxic. Indeed, if alcohol were a newly formulated beverage, its high toxicity and addiction potential would surely prevent it from being marketed as a food or drug. This conclusion runs counter to the common view that one's own use of alcohol is harmless. That mistaken impression arises for several reasons.

First, the more frequently we experience an event without a negative outcome, the lower our level of perceived danger. For example, most of us have not had a life-threatening traffic accident; thus, we feel safer in a car than in an airplane, although we are 10 to 15 times more likely to die in an automobile accident than in a plane crash. Similarly, most of us have not had a life-threatening experience with alcohol, yet statistics show that every year about 300 people die in the United States from an alcohol overdose, and for at least twice that number of overdose deaths, alcohol is considered a contributing cause.

Second, having a sense of control over a risky situation reduces fear. People drinking alcoholic beverages believe that they have reasonably good control of the quantity they intend to consume. Control of the dose of alcohol is indeed easier than with many natural or illicit substances where the active ingredients are not commercially standardized. Furthermore, alcohol is often consumed in a beverage that dilutes the alcohol to a known degree.

Consider the following: The stomach capacity of an average adult is about 1 liter; therefore, a person is unlikely to overdose after drinking beer containing 5 percent alcohol. Compare this situation to GHB (a depressant originally marketed in health food stores as a sleep aid), where stomach capacity does not place much of a limit on consumption because the effective dose is only one or two teaspoonfuls. No wonder that more than 50 percent of novice users of GHB have experienced an overdose that included involuntary loss of consciousness.
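The stomach-capacity argument can be checked with rough arithmetic. The Python sketch below assumes an ethanol density of 0.789 grams per milliliter and treats the 5 percent figure as alcohol by volume; both are assumptions added here, and the variable names are illustrative. The 330-gram lethal dose is the figure quoted earlier for a 70-kilogram adult.

```python
# Illustrative sketch only. The density and "by volume" reading are assumptions.
ETHANOL_DENSITY_G_PER_ML = 0.789   # assumed density of ethyl alcohol
BEER_ABV = 0.05                    # 5 percent beer, read as alcohol by volume
STOMACH_CAPACITY_L = 1.0           # about 1 liter, per the text
MEDIAN_LETHAL_DOSE_G = 330         # figure quoted earlier for a 70-kg adult

# Grams of ethanol in one liter (1,000 mL) of beer.
grams_per_liter = 1000 * BEER_ABV * ETHANOL_DENSITY_G_PER_ML

# Liters of beer that would contain the median lethal dose.
liters_for_lethal_dose = MEDIAN_LETHAL_DOSE_G / grams_per_liter

print(f"ethanol per liter of beer: about {grams_per_liter:.0f} g")
print(f"beer containing a lethal dose: about {liters_for_lethal_dose:.1f} L")
print(f"stomach volumes required: about {liters_for_lethal_dose / STOMACH_CAPACITY_L:.0f}")
```

Under these assumptions a lethal dose corresponds to several stomach volumes of beer, which is why dilution acts as a practical brake on consumption in a way that it does not for a substance dosed by the teaspoonful.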

Another reason that alcohol is often thought to be safe is that popular media do not routinely report fatalities from alcohol overdoses. Deaths are usually considered newsworthy when they involve a degree of novelty. Thus a fatality caused by LSD or MDMA is thought to be more interesting than one caused by alcohol.

A simpleminded look at the ratio of effective to lethal doses ignores many complications, some of which are well recognized, some rather subtle. Take, for example, the fact that danger generally increases with repetitive consumption. High blood levels of a drug, without rest periods between use, tend to heighten risk, because the affected organs do not have sufficient time to recover. Studies of MDMA use, for example, show that relatively small repeated doses result in disproportionately large increases of MDMA in blood plasma. Cocaine is the substance that induces the highest rate of repetitive consumption as a result of mood change. Heroin and alcohol come in second and third. Also, the tendency of a user to take a "booster" dose prematurely is greater with substances that require an hour or more to provide the full psychological effect—during the interim the user often assumes that the original dose was not sufficiently potent. This phenomenon routinely occurs with dextromethorphan (found in cough medicines), GHB and MDMA.

Overdose quantities that are based on acute toxicity also do not take into account the probability that an individual will become addicted. This probability can be cast as a drug's capture ratio: Of the people who sample a particular substance, what portion will become physiologically or psychologically dependent on the drug for some period of time? Heroin and methamphetamine are the most addictive by this measure. Cocaine, pentobarbital (a fast-acting sedative), nicotine and alcohol are next, followed by marijuana and possibly caffeine. Some hallucinogens—notably LSD, mescaline and psilocybin—have little or no potential for creating dependence.

Finally, a comparison of overdose fatalities does not take into account cognitive impairments and risky or aggressive behaviors that sometimes follow drug use. And as most people are well aware, a substantial proportion of violent confrontations, rapes, suicides, automobile accidents and AIDS-related illnesses are linked to alcohol intoxication.

Despite the health risks and social costs, consciousness-altering chemicals have been used for centuries in almost all cultures. So it would be unrealistic to expect that all types of recreational drug use will suddenly cease. Self-management of these substances is extremely difficult, yet modern Western societies have not, in general, developed positive, socially sanctioned rituals as a means of regulating the use of some of the less hazardous recreational drugs. I would argue that we need to do that. The science of toxicology may provide one step in that direction, by helping to teach members of our society what a lot of tribal people already know.
