The Adaptable Gas Turbine

By Lee S. Langston

Whether creating electricity or moving planes, this engine continues to inspire innovation

DOI: 10.1511/2013.103.264

Turbines have been around for a long time: windmills and water wheels are early examples. The name comes from the Latin turbo, meaning vortex, and thus the defining property of a turbine is that a liquid or gas turns the blades of a rotor, which is attached to a shaft that can perform useful work. Hydrocarbon-fueled turbines, however, are among the youngest energy conversion devices: Their first use in either generating electricity or powering jet aircraft flight took place in 1939. Through the efforts of many thousands of engineers in the intervening 70 years or so, such gas turbines have come to dominate aircraft propulsion and, with their now-unmatched thermal efficiency and low cost, are the superstars of electric power plants. With energy a central concern in modern society, gas turbine technology continues to advance.

Much of my effort as a mechanical engineer, both in industry and academia, has been guided by the first law of thermodynamics (stated as the principle of the conservation of energy): Energy is neither created nor destroyed, but can be changed in form. The “changed in form” part of the law is what occupies many mechanical engineers as they research and develop energy conversion devices. An example of this conversion is transforming heat (say, from the combustion of a hydrocarbon fuel) into motive power (such as that of a jet-powered airplane) or electricity. Devices that perform this transformation are called prime movers.

Illustration by Tom Dunne.

The major modern-day prime movers convert heat supplied by nuclear or chemical reactions into useful forms of energy. The gas turbine, co-invented by Hans von Ohain, Frank Whittle and the engineers at the Swiss firm Brown, Boveri & Cie, succeeded the steam engine, realized in 1769 by Thomas Newcomen and James Watt; the spark ignition engine of Nikolaus Otto from 1876; the steam turbine of Charles Parsons from 1884; and the compression ignition engine of Rudolf Diesel from 1897.

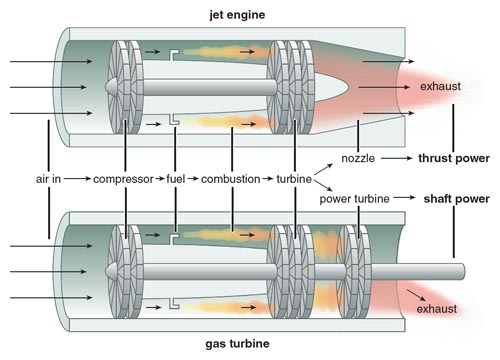

The name gas turbine is somewhat misleading, for it implies a simple turbine that uses gas as a working fluid. Actually, a gas turbine has a compressor to draw in and compress gas (usually air), a combustor (or burner) to add and burn fuel (usually a hydrocarbon liquid or gas) to heat the compressed gas, and a turbine (or expander) to extract power from the hot gas flow as it rotates the turbine blades.

Because the origin of the gas turbine lies in both the electric power field and aviation, there has been a profusion of other names for the gas turbine. For land and marine applications the gas turbine moniker is most common, but it is also called a combustion turbine, a turboshaft engine and sometimes a gas turbine engine. For aviation applications it is usually called a jet engine, and it goes by various other names (depending on the particular aviation configuration or application) such as jet turbine engine, turbojet, turbofan, fanjet and turboprop or prop jet (if it is used to drive a propeller). The compressor-combustor-turbine part of the gas turbine is commonly called the gas generator.

In an aircraft gas turbine, all of the turbine power is used to drive the compressor (which may also have an associated fan or propeller). The gas flow leaving the turbine is then accelerated to the atmosphere through an exhaust nozzle to provide thrust or propulsion power. Gas turbine or jet engine thrust power is equal to the momentum increase in the mass flow from engine inlet to exit, multiplied by the flight velocity. The actual thrust force produced in the engine (and pulling the plane forward) is the summation of all the axial components of pressure forces on the internal surfaces of the engine exposed to the gas path flow.
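As a rough numerical illustration of the thrust power relation described above, the following Python sketch treats thrust as the air mass flow multiplied by the velocity increase from engine inlet to exit. It assumes a simplified engine (fuel mass flow and nozzle pressure terms are neglected), and the function names and sample values are hypothetical, chosen only for illustration.

```python
def net_thrust_newtons(air_mass_flow_kg_s, exit_velocity_m_s, flight_velocity_m_s):
    """Thrust as the rate of momentum increase of air between inlet and exit.

    Simplifying assumptions (not from the article): fuel mass flow is
    ignored and the nozzle exhausts at ambient pressure.
    """
    return air_mass_flow_kg_s * (exit_velocity_m_s - flight_velocity_m_s)


def thrust_power_watts(air_mass_flow_kg_s, exit_velocity_m_s, flight_velocity_m_s):
    """Thrust power: thrust multiplied by the flight velocity."""
    thrust = net_thrust_newtons(
        air_mass_flow_kg_s, exit_velocity_m_s, flight_velocity_m_s
    )
    return thrust * flight_velocity_m_s


if __name__ == "__main__":
    # Hypothetical operating point, for illustration only.
    thrust = net_thrust_newtons(100.0, 600.0, 250.0)
    power = thrust_power_watts(100.0, 600.0, 250.0)
    print(f"thrust: {thrust:.0f} N, thrust power: {power / 1e6:.2f} MW")
```

In this simplified picture the thrust power is zero for a stationary aircraft even though the thrust itself is not, which is one reason jet engines are usually rated by thrust rather than by power.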

A jet engine can be small enough to be handheld and produce a few pounds of thrust (1 pound of thrust is equivalent to 4.45 newtons of force) to be used on model airplanes or military drones. (The retired Swiss pilot Yves Rossy, nicknamed “Jetman,” attached four such small jet engines, each producing 50 pounds of thrust or about 223 newtons, to a back-mounted wing and flew across the English Channel in 2008 and over the Grand Canyon in 2011.) On modern commercial jet aircraft, gas turbines are typically in the range of 30,000 pounds of thrust (or about 133,000 newtons), with the largest currently at about 100,000 pounds of thrust (445,000 newtons) on Boeing’s long-range 777 airplanes.
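The pound-to-newton conversions quoted above can be checked with a few lines of Python. This is a minimal sketch: the helper name is hypothetical, the thrust values are the ones quoted in the text, and the conversion factor used is the standard 4.448222 newtons per pound-force rather than the rounded 4.45.

```python
# Standard conversion factor; the text rounds it to 4.45.
NEWTONS_PER_POUND_FORCE = 4.448222


def pounds_to_newtons(pounds_of_thrust):
    """Convert a thrust given in pounds-force to newtons."""
    return pounds_of_thrust * NEWTONS_PER_POUND_FORCE


if __name__ == "__main__":
    # Thrust levels mentioned in the text, in pounds of thrust.
    examples = [
        ("small handheld jet engine", 50),
        ("typical commercial engine", 30_000),
        ("largest commercial engine", 100_000),
    ]
    for label, pounds in examples:
        print(f"{label}: {pounds:,} lbf = {pounds_to_newtons(pounds):,.0f} N")
```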

Image courtesy of Pratt & Whitney.

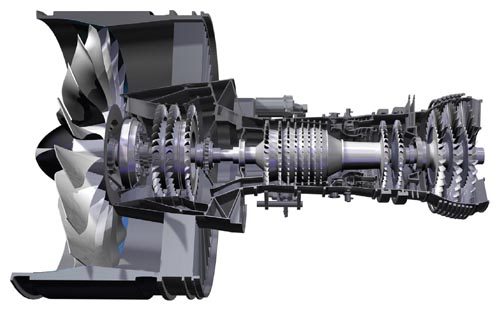

The jet engine shown in the figure above is a turbofan engine, with a larger-diameter compressor-mounted fan. Thrust is generated by air passing through the fan alone (called bypass air) and through the gas generator itself. The combination of mechanisms greatly increases the fuel efficiency of the engine. With a large frontal area to pull in a higher mass of air (with the trade-off that the configuration does engender higher aerodynamic drag forces at cruising flight velocities), the turbofan engine generates peak thrust at takeoff speeds. It is therefore most suitable for commercial aircraft, which need most of their thrust to get off the ground, not to maneuver once in the air. In contrast, a turbojet does not have a fan and generates all of its thrust from air that passes through the gas generator. Turbojets have smaller frontal areas (and thus lower drag at high flight velocities) and generate peak thrusts at high speeds, making them most suitable for fighter aircraft that travel at much higher velocities than commercial craft.

In nonaviation gas turbines, only part of the turbine power is used to drive the compressor. The remainder is used as output shaft power to turn an energy conversion device, such as an electrical generator, or to compress natural gas in a pipeline so it can be transported. Shaft power land-based gas turbines can get very large (with an output as high as 375 megawatts, enough to power about 300,000 homes). The unit shown in the figure at right is called an industrial or frame machine. It is constructed for ruggedness and long life, so weight is not a major factor as it is with a jet engine. Typically frame machines are designed conservatively but have made use of technical advances in jet engine development when it has made sense to do so.

Photograph courtesy of Siemens AG.

Lighter-weight gas turbines derived from jet engines and used for nonaviation applications are called aeroderivative gas turbines. Aeroderivatives are used to drive natural gas pipeline compressors, power ships and produce electric power. They are used particularly to provide peaking and intermediate power for electric utilities, because they can start up quickly. Peaking power supplements a utility’s normal output during high-demand periods, such as summer air conditioning in major cities.

The gas turbine has some design advantages over other power systems. It is capable of producing large amounts of useful power for a relatively small size and weight. Because motion of all its major components involves pure rotation (there is, for instance, no reciprocating motion as in a piston engine), its mechanical life is long and the corresponding maintenance cost is relatively low. However, during its early development, the deceptive simplicity of the gas turbine caused problems, until aspects of its fluid mechanics, heat transfer and combustion were better understood. In the words of Edward Taylor, the first director of the MIT Gas Turbine Laboratory, early gas turbine compressor designs foundered on a rock, and the rock was stall. Stall is the sudden blockage and even reversal of engine flow, caused when fluid separates from the compressor airfoil surfaces instead of flowing evenly over them. Taylor paraphrased P. T. Barnum’s words to describe two kinds of stall: You can operate a compressor so it stalls all of the blades some of the time (called surge) or some of the blades all of the time (called rotating stall). It took much early research and development to avoid such stall conditions.

Although a gas turbine must be started by some external means (a small external motor or other source, such as another gas turbine), it can be brought up to full load (peak output) conditions in minutes, in contrast with a steam turbine plant whose startup time is measured in hours.

Gas turbines can also use a variety of fuels. Natural gas is commonly used in land-based gas turbines, whereas light distillate (or kerosene-like) oils power aircraft jet engines and marine gas turbines. Diesel oil or specially treated residual oils (such as biodiesel) can also be used, as well as combustible gases (such as methane) derived from blast furnaces, refineries, landfills, sewage and gasification of solid fuels such as coal, wood chips and bagasse (the crushed stalks of sugarcane or sorghum). (Some recent work in South Africa on a type of nuclear power plant called a pebble bed reactor, which uses tennis ball–sized spheres of graphite embedded with fissile material, provided helium gas to power a type of turbine that has a closed cycle, meaning it uses a gas preheated by an external source that is recirculated through the system.)

An additional advantage of gas turbines is that the usual working fluid is atmospheric air, and the machine does not require liquid cooling—an important consideration in many parts of the world, where cooling water is in short supply.

In the early days of its development, one of the major disadvantages of the gas turbine was its lower efficiency (hence higher fuel usage) when compared to other engines and steam turbine power plants. However, over the past 70 years, continuous engineering development has pushed the thermal efficiency (18 percent for the 1939 Brown Boveri gas turbine) to present levels of about 45 percent for simple cycle operation. Efficiencies can reach over 60 percent for combined-cycle operations, where exhaust gases are put to additional use.

It is now hard to remember when the aviation gas turbine—the jet engine—was not part of aircraft flight. Before jet engines, an aviation piston engine manufacturer could expect to sell 20 to 30 times the original cost of the engines in aftermarket parts. With the advent of the jet engine, this aftermarket figure dropped to three to five times the original cost (an important reduction that made air travel affordable and reliable, and airlines profitable, although engine manufacturers have had to alter their business models). In recent years, technology and market demands have resulted in even longer lasting engine components, dropping the aftermarket figure to increasingly lower levels.

A well-managed airline will try to keep a jet-powered plane in the air as much as 18 hours a day, 365 days a year. If well maintained, the airline expects the engines to remain in service and on the wing for 15,000 to 30,000 hours of operation, depending on the number of takeoffs and landings experienced by the plane. After this period, the jet engine will be taken off and overhauled, usually with replacement of parts that experience heating, such as the combustor and turbine. (Currently the in-flight shutdown rate of a jet engine is less than 1 per 100,000 flight hours. In other words, on average, an engine fails in flight once every 30 years.)

Aircraft jet engines make up about 25 percent of the cost of the airplane. In 2011 the worldwide aviation gas turbine market amounted to $32 billion, of which $27 billion was for commercial aircraft, with the remainder for military applications. Currently there are about 19,400 airplanes in the worldwide air transport fleet. Both major airplane manufacturers, Boeing in the United States and Airbus in Europe, project that there will be 34,000 aircraft in world fleets by 2030.

This promising market is stimulating jet engine development for commercial airlines, with an emphasis on fuel economy. Currently, 40 to 60 percent of airline operating expenses are jet fuel costs. The Pratt & Whitney turbofan engine shown in the second figure is currently being developed for new, single-aisle, 90- to 200-passenger aircraft. This engine has a hub-mounted gearing system that drives the front-mounted fan at lower speeds, permitting as much as 16 percent less fuel consumption and much reduced engine noise. Later, the geared-fan technology may be applied to higher-thrust engines for larger airplanes.

Although military jet engines represent a smaller segment of the gas turbine market, the technology developed there has historically resulted in benefits for commercial aviation. The new U.S. F135 Joint Strike Fighter engine, at 40,000 pounds of thrust, is a case in point. It powers three variants of aircraft: An Air Force fighter that takes off conventionally, a carrier-based Navy jet and a short takeoff/vertical landing aircraft for the Marines.

Temperatures in the Joint Strike Fighter engine run 3,600 degrees Fahrenheit (1,982 degrees Celsius). How do the cobalt-nickel alloy turbine airfoils survive such running conditions? The vanes and blades are cooled to some eight-tenths to nine-tenths of their alloy melting temperatures (2,200 to 2,600 degrees Fahrenheit). Each high-temperature turbine airfoil is formed from an elaborate casting to accommodate the intricate internal passages and surface hole patterns necessary to channel and direct cooling air (bled from the compressor) within and over its external surfaces. An error in hole location or cooling air pressure ratios could cause airfoil gas path inhalation rather than cooling exhalation, which at such high temperatures would be catastrophic. The cooling design is based on some 30 years of research and unequivocally pushes forward the state of the art of turbine performance and durability.

In the past 30 years, advances in nonaviation technology have almost doubled the thermal efficiency of new gas turbine electric power plants. In 2011, the worldwide market for nonaviation gas turbines came in at $16 billion, most of it for new electrical plants. Modern gas turbine combined-cycle power plants produce electric power at levels as high as half a gigawatt, with thermal efficiencies that now exceed the 60 percent mark, almost twice what I learned about as an undergraduate mechanical engineering student.

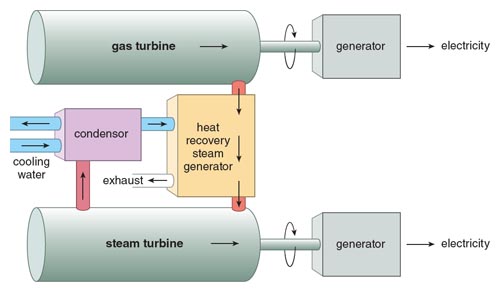

Illustration by Tom Dunne.

A combined-cycle gas turbine power plant uses a gas turbine (usually fueled by natural gas) to drive an electrical generator. The hot exhaust is then used to produce steam in a heat exchanger (called a heat recovery steam generator) to supply a steam turbine whose useful work output provides the means to generate more electricity. (If the steam is used instead to heat buildings, the unit would be called a cogeneration plant.) A good efficiency value for modern gas turbines is 40 percent, whereas a steam turbine at typical combined-cycle conditions is about 30 percent. Using the first law of thermodynamics and the definition of thermal efficiency, the combined efficiency of the two is about 58 percent, greater than either of the individual devices alone.
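The 58 percent figure above follows from a short energy balance, sketched here in Python. The sketch assumes an idealized plant in which all of the heat rejected by the gas turbine is available to the steam cycle (no stack or heat exchanger losses); the function name is hypothetical.

```python
def combined_cycle_efficiency(eta_gas, eta_steam):
    """Overall thermal efficiency of a gas turbine feeding a steam turbine.

    Per unit of fuel heat input:
      - the gas turbine converts eta_gas into work,
      - the remaining (1 - eta_gas) leaves as exhaust heat,
      - the steam cycle converts eta_steam of that exhaust heat into work.

    Idealization (an assumption of this sketch): all exhaust heat
    reaches the steam cycle.
    """
    gas_turbine_work = eta_gas
    exhaust_heat = 1.0 - eta_gas
    steam_turbine_work = eta_steam * exhaust_heat
    return gas_turbine_work + steam_turbine_work


if __name__ == "__main__":
    # Efficiency values quoted in the text: 40 percent gas, 30 percent steam.
    eta = combined_cycle_efficiency(0.40, 0.30)
    print(f"combined efficiency: {eta:.0%}")
```

Written as a formula, the combined efficiency is the gas turbine efficiency plus the steam turbine efficiency times the fraction of heat the gas turbine rejects: 0.40 + 0.30 × (1 − 0.40) = 0.58.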

The heart of the combined-cycle plant (or more accurately, the combined power plant, because the thermodynamic cycles aren’t combined) is the gas turbine with its gas exhaust temperature, typically at about 1,000 degrees Fahrenheit (or 538 degrees Celsius), sufficient to produce steam to power the steam turbine. The Siemens 375 megawatt gas turbine shown in the third figure is the center of a new 578-megawatt combined-cycle gas turbine plant in Irsching, Germany. On May 19, 2011, Siemens announced it had reached a thermal efficiency of 60.75 percent, which probably makes it the most efficient heat engine ever operated.

“I sell here, sir, what all the world desires to have—POWER.” These were the words of early British industrialist Matthew Boulton to James Boswell, quoted in Boswell’s 1791 book The Life of Samuel Johnson. Boulton and his partner, Scottish engineer James Watt, manufactured the first steam engines. Their firm went out of business long ago, but the world’s need for power has multiplied many times over since Boulton met Boswell.

Such increasing need for power is being fulfilled by gas turbines, in both flight propulsion and electrical generation. One can safely predict that the gas turbine will increase its role as a prime mover, as engineers continue to improve its performance and find new uses for it.
