Acquiring Literacy Naturally

By Dominic Massaro

Behavioral science and technology could empower children to learn to read


DOI: 10.1511/2012.97.324

When we think about how we learn language, we think of speech as somehow more fundamental than reading. Most children hear speech prenatally and participate in a world of spoken language. Entering the terrible twos, they have already heard about 1,000 hours of speech. Learning to read follows a very different trajectory.

In the United States, the typical youngster receives specific lessons in kindergarten through third grade, designed to teach letters, phonics, decoding words and phrases, and finally reading for meaning. Most reading experts believe that children must be five or six years old before they can begin to pick up reading and that they cannot succeed without a mastery of spoken language.

Many of us associate reading with literacy, although the term is now used to describe a variety of knowledge domains, such as computer literacy. Reading literacy is often defined as the ability to use written language to function seamlessly in a literate culture, to pursue goals independently and to acquire knowledge required for a successful life. Although there are degrees of literacy, a minimum requirement is to read fluently with understanding. Even with hours of instruction, however, a significant number of children are delayed or never succeed in achieving this milestone. The latest American National Assessment of Adult Literacy revealed that 30 million people in the United States have no more than the simplest and most concrete literacy skills, which are insufficient for typical daily living, and 63 million are functionally illiterate. They cannot comprehend newspapers and books and don’t have the ability to understand documents required for citizenship, such as voting ballots. Together, the cost of illiteracy and the huge cost of formal literacy instruction are among the major social and financial burdens on societies. A possible contributing factor to this situation is that reading experience is delayed until schooling begins.

There are simple explanations for why reading, at least until now, has been considered speech’s struggling sibling. Reading made its appearance less than 6,000 years ago, whereas speech might be about 10 times older. Written language isn’t as flexible as spoken language. Our ancestors had to venture outside their caves to survive and could communicate by speech and gesture. Only upon returning could they represent their experiences in some permanent visual form, as in France’s Paleolithic cave paintings (Figure 1).

Image from Corbis.

Communicating by visual representations has seen several revolutions since the cave paintings were created, notably the inventions of written language and the printing press. Undeniably, we are now also in the midst of another revolution in how we interact with print. I’m constantly impressed by the ubiquity of our interactions with small mobile screens. As a people, we have adapted to focusing at arm’s length to read the latest e-mail, instant message, Twitter or Facebook update. This holds true not just for adolescents but for every generation. Print plays an increasing role in our daily lives—yet that role constantly evolves. We no longer cuddle up with a book but rather connect to an electronic reader on our mobile device. With the advent of multimedia books in which print commands less of the content, schooling is being transformed. The formats through which we communicate and the corresponding popular vocabulary are also evolving. Just recently, my attention was attracted to a section of text when I saw the word “YouTube” when in fact “your tub” was written.

Dave Carpenter/CartoonStock

Communicating via written language, as with gestures, demands visual attention and active hands. Speech is more complementary in that it can narrate dialog when the hands and eyes are otherwise occupied in an unending competition to be the fittest to survive. Although written language has this disadvantage, it is nonetheless possible to communicate in this modality. E-mail and instant messaging most recently bear witness to its use. If the presentation of written language were adapted to the capabilities of the child, then reading might also be easily acquired early in life. For example, advances in technology have made readable displays easily available to toddlers, as witnessed by their apparent fascination with portable touch-sensitive visual displays. After just a few experiences with touch screens, infants quickly come to expect that other objects such as TVs or books will also react when touched.

Photographs courtesy of the author.

Notwithstanding the intuitive primacy of spoken language, I propose that once an appropriate form of written text is meaningfully associated with children’s experience early in life, reading will be learned inductively with ease and with no significant negative consequences. As described by John Shea in this magazine, “there are no known populations of Homo sapiens with biologically constrained capacities for behavioral variability” (March–April 2011). I envision a physical system, called Technology Assisted Reading Acquisition (TARA), to provide the opportunity to test this hypothesis. TARA exploits recent developments in behavioral and brain science and technology, which are rapidly evolving to make natural reading acquisition possible before formal schooling begins. In one instantiation (Figure 3), TARA would automatically recognize a caregiver’s speech and display a child-appropriate written transcription.

We can view speech and writing as two forms of language; sign language is a third. Notwithstanding our bias for spoken language because of our language acquisition experience, I propose that there is no reason to consider one of them as more fundamental than the others. A basic assumption behind my proposal is that there are analogous processes at work in perceiving speech and text. Luckily, our introspections and experience are not required to debate this issue. Empirical and theoretical research in several fields of behavioral science has set the stage for the present proposal.

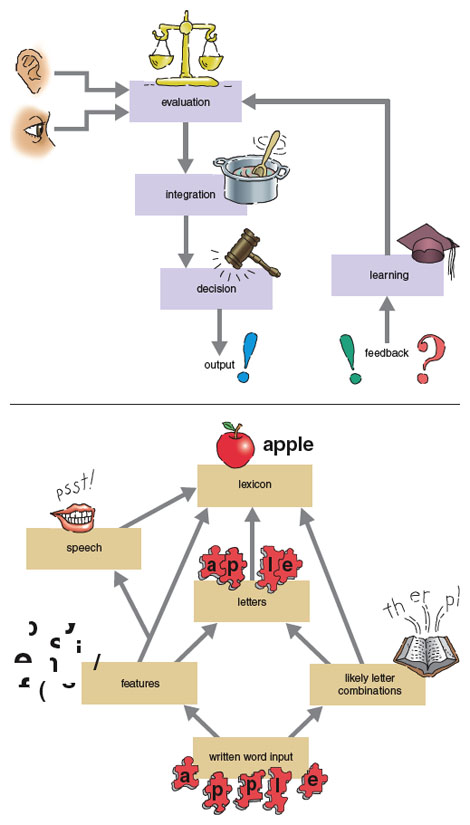

In an earlier publication in this magazine, David Stork of Ricoh Innovations and I discussed an example of pattern recognition in terms of our experience in attempting to identify an apple’s variety (May–June 1998). This identification is influenced by many characteristics, including shape, color, texture, smell and taste. Two principles emerged from research at that time: First, the brain automatically combines information from our different senses, and second, this integration process holds not only for apples and other objects but also for language understanding, as in speech perception. This integration process is mathematically described by Bayes’ law, first proposed by an English minister about two and a half centuries ago. We also showed that this law could be productively formulated as a psychological theory called the Fuzzy Logical Model of Perception (FLMP).

Illustration by Tom Dunne.

As illustrated in Figure 4, the FLMP views pattern recognition as involving three successive but overlapping processing stages. The initial evaluation stage assesses each possible source of evidence for the event being recognized. Evaluation determines how much each of the available sources (shape, color and so on) supports relevant alternatives; for example, is this apple a Granny Smith, Golden Delicious, McIntosh or Honeycrisp? The integration stage combines or integrates the various sources of support given by the evaluation stage. Finally, the decision stage, faced with the support for each of the possible alternatives, chooses the alternative with the most relative support.
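The three stages can be made concrete with a small sketch. It assumes multiplicative integration and a relative-goodness decision rule, which is how the FLMP is usually formulated; the function name `flmp` and all of the support values are hypothetical and chosen only for illustration, not measured data.

```python
from math import prod


def flmp(support):
    """Combine per-source support values into relative support per alternative.

    `support` maps each alternative to a list of degrees of support in [0, 1],
    one value per source of evidence (the output of the evaluation stage).
    """
    # Integration: multiply the support from every source for each alternative.
    integrated = {alt: prod(values) for alt, values in support.items()}
    total = sum(integrated.values())
    # Decision: express each alternative's support relative to all alternatives.
    return {alt: value / total for alt, value in integrated.items()}


# Hypothetical evaluation-stage outputs for two sources: [shape, color].
apple_support = {
    "Granny Smith": [0.6, 0.9],
    "Golden Delicious": [0.7, 0.3],
    "McIntosh": [0.5, 0.1],
    "Honeycrisp": [0.5, 0.2],
}

relative_support = flmp(apple_support)
best = max(relative_support, key=relative_support.get)
```

With these made-up numbers, shape alone slightly favors Golden Delicious, but once color is integrated the alternative with the most relative support is Granny Smith.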

At that time, we did not address our potentially controversial assumption: that object recognition and speech perception follow the same explanatory principles. Some scientists believe that speech perception and language understanding more generally follow specialized processes, and are not adequately described by a pattern recognition framework. Since that time, however, the assumption of specialized processes has not been necessary to account for language perception and understanding in many experiments. The fundamental principle now emerging is that many aspects of language processing involve a form of pattern recognition that is influenced by multiple sources of information and whose outcomes can be quantitatively described by the FLMP. Pattern recognition involves an inferential process in which a perceiver uses current evidence to impute some interpretation that is most likely. Influence by multiple sources of information means that the current evidence can come from a variety of auditory, visual and gestural cues, as well as lexical, semantic, syntactic and pragmatic constraints.

The FLMP also assumes that these three successive but overlapping processes take place in reading. Feature evaluation analyzes the written input and provides the degree to which each feature of the letters and words matches representations of letters and words in memory. Features are visual characteristics of the printed text that distinguish the different letters and words for the reader. Readers also use what they already know about what letters and letter patterns are likely to occur in a given context. For example, if feature evaluation narrows the first letter of a word to “f” or “t” and the second letter to “n” or “h,” then the sequence “th” is most likely. As shown in Figure 4, this orthographic knowledge serves as an additional source of information in reading words. Experiments have shown that words with high orthographic structure are recognized more quickly than words low in orthographic structure.
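The “th” example can be worked through in a few lines. This is a minimal sketch that assumes the visual evidence leaves each candidate letter equally supported and that orthographic knowledge can be summarized as one plausibility value per two-letter opening; the names and every number below are hypothetical.

```python
from itertools import product

# Hypothetical visual support after feature evaluation: each position is
# narrowed to two equally supported candidate letters.
first_letter = {"f": 0.5, "t": 0.5}
second_letter = {"n": 0.5, "h": 0.5}

# Hypothetical orthographic support: how plausible each opening is in English.
orthographic = {"fn": 0.01, "fh": 0.01, "tn": 0.01, "th": 0.9}


def rank_openings(first, second, ortho):
    """Multiply visual and orthographic support, then normalize across openings."""
    scores = {}
    for a, b in product(first, second):
        scores[a + b] = first[a] * second[b] * ortho[a + b]
    total = sum(scores.values())
    return {pair: score / total for pair, score in scores.items()}


ranked = rank_openings(first_letter, second_letter, orthographic)
best_opening = max(ranked, key=ranked.get)
```

Because the visual evidence cannot separate the four candidate openings, the orthographic source alone decides the outcome, and “th” receives the most relative support.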

In terms of the FLMP, we can expect multiple influences in word recognition as in other domains. Before you continue reading, try to think of a four-letter word that ends in the letters “e n y.” If you failed to find one, you might have adopted the following inner speech strategy. “Oh, the letters ‘e n y, eenee.’ I’ll just go through the alphabet: anee, beenee, ceenee, deenee, eenee, etc.” Reaching the letter “z, zeenee,” you conclude there is no word that meets this criterion. There is a word deny, however, but it is not pronounced deenee. This trick illustrates that we can’t help but sound out writing, and some have even proposed that we can only read by first mapping the written letters into a spoken counterpart and recognizing the word on this basis. Although this idea is certainly wrong, speech information can contribute to reading. Letter information from the word representation can excite phoneme information which also enters the word recognition process. Experiments have also shown that words with high spelling-to-sound fluency are recognized more quickly than words low in this variable.

These same processes appear common to many other languages, not just English. Brian MacWhinney from Carnegie Mellon University, Elizabeth Bates (now deceased), their colleagues, and other scientists have demonstrated that the actual sources of information can differ dramatically in different languages, but the underlying processes appear to be the same. In sentence processing, for example, word order is more important than animacy of the constituents in English, whereas the opposite holds in Italian. Given the sentence “The barn kicked the horse,” most English speakers interpret the barn as the agent/actor, whereas most Italian speakers claim the horse as the agent/actor.

The processes that have been uncovered also operate in language acquisition, not just in accomplished language users. Roberta Golinkoff from the University of Delaware, Kathy Hirsh-Pasek from Temple University and their colleagues find support for an emergentist coalition model that assumes children rely on multiple cues over development in the mapping of words onto referents. The use of and the weight given to these cues change across development. For example, infants initially rely mostly on perceptual cues and gradually begin to use a speaker’s intent and linguistic cues to determine word reference.

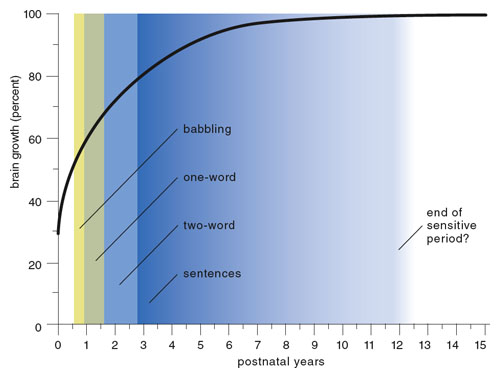

Speech is easily acquired but only if the child is immersed in spoken language early in life. We know this from the few sad cases of so-called feral children who are socially isolated and reach school age or adolescence without exposure to language. These distressing cases and other research from developmental, behavioral and brain sciences have documented so-called critical periods in audition, vision and language. These periods are crucial for development. In contrast to later in life, the brains of preschool children are especially plastic, or malleable. As shown in Figure 5, brain growth and language acquisition are highly correlated. Deprivation of sensory or linguistic input during this time can diminish neural cell growth, produce cell loss and reduce the number of dendritic connections among neural cells. This loss can result in a substantial deficit in the functions of sensory and language systems of the child. Using spoken language as a relevant comparison, it is possible that limited written input during this period can place the child at a disadvantage in learning to read when schooling begins.

Illustration adapted by Tom Dunne from Sakai 2005 with permission from AAAS.

There is less need today, relative to just a few years ago, to instruct an audience about the sophisticated abilities of infants from their birth through their first years of life. Andrew Meltzoff of the University of Washington was the first to show that infants can imitate facial movements, and there are now many delightful variations of infants’ imitative behaviors on the Internet. The learning of baby signs is also a form of imitation learning, and Linda Acredolo and Susan Goodwyn at the University of California, Davis, systematically documented the successful learning of baby signs in parallel with speech.

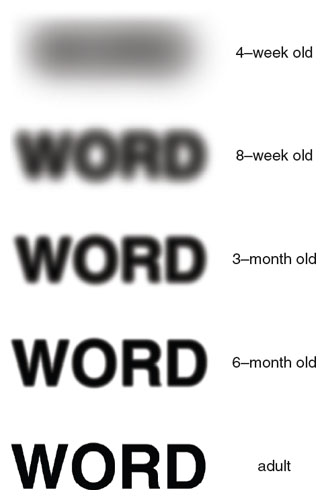

Illustration by Tom Dunne.

Well-documented research and measurement of infants’ vision development suggest that infants during the first year of life have the capacity to perceive written language. Some of the vision milestones for infants are the perception of color by one month, focusing ability at two months, eye coordination and tracking at three months, depth perception at four months, and object and face recognition at five months. Infants’ visual acuity also improves dramatically from birth onward, reaching close to adult levels by eight months of age. It appears that infants do have the motor and visual capabilities to acquire a visual language, and it is possible that they could acquire literacy naturally.

Infants are sophisticated information processors and quick learners. Early research with 2D line drawings of animals with different features found that 10- and even 7-month-old infants formed categories on the basis of correlations among the line-drawn features. More recently, typical experiments expose infants to a series of inputs with specific statistical constraints and measure whether infants learn and remember them. In a study using geometric elements, for example, 9-month-old infants were habituated to scenes with several geometrical objects with certain statistical constraints among the spatial location of the objects. Infants learned the statistical structure as documented by their attention and habituation behavior. After habituation, they attended more to scenes that maintained the statistical structure of the habituation scenes than to scenes that violated this structure.

Illustration by Tom Dunne.

Although infants have been touted as clever perceivers of their visual world, developmental psychologists have not tested their early reading abilities. Fortunately, Mark Changizi of 2AI Labs found that the physical properties of typical real-world objects and letters are topographically similar and are probably treated similarly by the visual system. It is only natural to think of hieroglyphics as being based on object properties, but this would not be necessarily true of letters. However, letter shapes evolved in part from hieroglyphic forms, so it is not surprising that even letters have properties of physical objects. This relationship is still apparent today: There are exercises and books aimed at teaching the alphabet by drawing children’s attention to similarities in shape of some object and a letter. The letter S looks like a snake, the letter H is part of a ladder, the letter O is an open mouth and so on.

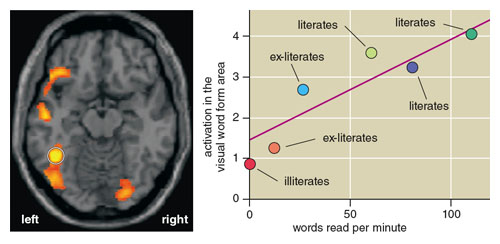

Images adapted by Tom Dunne from Dehaene and Cohen 2011. Reprinted with permission from Trends in Cognitive Sciences.

Since letters have the same optical and visual properties as everyday objects and because infants have performed so expertly with everyday objects, there is reason to believe they would behave similarly with letters. Thus, we can envision the discrimination and categorization of letters, words and sentences as a pattern recognition problem that is analogous to the recognition of speech, music, objects and other categories such as dinosaurs and cars. Supporting this conclusion, a specific region of the brain, located in the left fusiform gyrus, is activated during reading as well as during object and face recognition.

Infants clearly have the capacity to perceive, process and learn semantic components in spoken language. Given the argument for infants being equipped for learning to read spontaneously, why haven’t they done so? Spoken language is present in a child’s environment continuously from birth and it is learned inductively. My answer must be that written language is not present often enough or saliently enough in the growing child’s world to allow inductive learning. Written language should also be acquired if, like speech, it is presented often enough and is perceptible in socially meaningful contexts. No child has yet had this opportunity, but current technology might enable this learning as easily in written language as it is in spoken language.

The challenge of applying TARA reawakens the controversial question of how children acquire language. Nativist and empiricist camps have fortified their beliefs around this question. Nativists believe that innate knowledge allows us to acquire the rules of language, which cannot be induced from normal language experience. Empiricists counter this claim by demonstrating how quickly infants learn arbitrary properties of their linguistic input and its context. They hold that infants’ language thus follows typical learning processes. Fortunately, we can conceptualize how language learning might take place without resolving this debate.

In 1960, philosopher and logician Willard Van Orman Quine illustrated the indeterminacy of translation using the example of a native who points at a white running rabbit and says “gavagai.” The linguist or anthropologist, not knowing the language, cannot determine whether the person is referring to the rabbit, the rabbit running, a white animal or a variety of other alternatives. A humorous anecdote showing another example of ambiguity of reference involves two children who decide it’s time to begin swearing. Johnny says to Jane, “I’ll say sh-t and you say a-s.” With this plan in hand, the kids go down to breakfast and Mom asks what they would like. Johnny answers, “Ah sh-t, give me some Cheerios.” Mom cracks him one upside the jaw, turns to Jane and angrily shouts, “And what do you want?” Jane looks over the situation and anxiously stutters, “I don’t know but you can bet your a-s it ain’t gonna be Cheerios.”

How might such ambiguities be resolved? Take the example of a toddler brother shaking a rattle in front of his little sister. His mother tells her, “Brother is shaking your rattle.” What is little sister to do? Both the continuous rattle-shaking and the stream of speech have to be (at least partially) recognized and associated with one another. Early speech research gave the promise that infants innately recognize the phonemes of the utterance, which would make the speech recognition part easier. This research did not hold up, but it did reveal how we often implicitly edit what we say to young children and how we say it in so-called infant-directed speech. Talkers emphasize words by speaking them in relative isolation from adjacent words, adjusting their voices to become higher pitched, using a wider pitch range, exaggerating the articulation of vowel sounds, exaggerating their emotional tone, speaking in simpler, shorter utterances, using greater repetition, and speaking more slowly. This type of linguistic input benefits language learning, which perhaps makes it less magical.

Now we’ve reached the stage where we must address how the ease of forming this association between the linguistic input and meaning depends on the modality of the linguistic input. To form an associative link, it is necessary to recognize each event and their co-occurrence. Recognizing an event requires some attention on the part of the perceiver, and it is difficult to recognize two events simultaneously. A good analogy is the huge cost of multitasking, such as texting or phoning while driving, because we must constantly switch between these two activities. Written language requires the child to pay consecutive attention to some meaningful event and to the written language describing that event. Like baby sign or American Sign Language, written language can still be learned because the caregiver–child interaction will necessarily involve consecutive attention to what is meaningful in the exchange and the written language. Caregivers will either attract the child’s attention to the sign, or simply sign in front of the object or event that the child is attending to. The caregiver illustrates how toast is buttered and then attracts the child’s attention to her depiction of the event in sign language. Learning to read naturally will require similar scenarios.

Understanding speech might have an advantage over reading. A spoken description occurring at the same time as a meaningful visual event might not require successive attention between them. However, learning their association might still require successively attending to the spoken description and the meaningful visual event. Like driving and talking, each behavior requires some attention, and a cost is paid if they are carried out at the same time. Given that most meaningful events extend over a significant time period (like shaking the rattle), the listener would usually have the opportunity for successive attention to the two inputs (the experienced event and the linguistic form). If this argument holds, written language may not be that different from spoken language.

Even if our premise is correct, technology has only recently become good enough to afford children the opportunity to learn to read naturally. TARA must capture the child’s experienced meaning and display it in written form. Not unlike the many philosophers who preceded us, we realize that the child’s meaning is not easily revealed. If a child is spoken to and she is listening, however, a good bet is that her meaning is related to what is being said. A possible way to apply TARA would be to recognize this speech and present it in written form. The presented text could be edited and embellished in a manner similar to infant-directed speech.

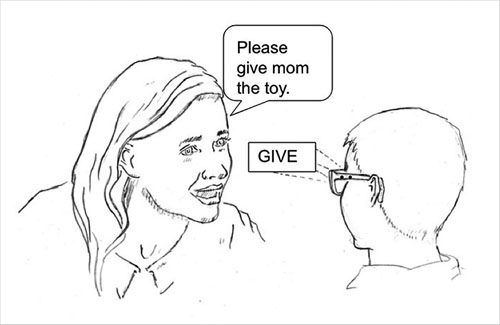

Successive words would be presented sequentially on a single line in a fixed window frame. The fixed window would allow the child to fixate on the words without requiring eye movements. Although this presentation method has not been validated with preschool children, school children can read this presentation mode just as efficiently and accurately as a typical document format. Figure 3 illustrates how TARA is used with a tablet computer, which can be held by the caregiver or placed inside a transparent holder sewn into a shirt that the caregiver would wear.
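The fixed-window presentation can be sketched as a simple schedule in which each word replaces the previous one at the same screen location. The function name `presentation_schedule` and the pacing parameter `seconds_per_word` are assumptions made for illustration; the article does not specify timing.

```python
def presentation_schedule(text, seconds_per_word=1.0):
    """Return (word, onset_in_seconds) pairs for one-word-at-a-time display.

    Every word is shown in the same fixed window, so the reader can keep
    fixating one location instead of moving the eyes along a line of text.
    """
    words = text.split()
    return [(word, index * seconds_per_word) for index, word in enumerate(words)]


# Hypothetical caregiver utterance, shown at an assumed pace of 2 seconds per word.
schedule = presentation_schedule("brother is shaking your rattle", seconds_per_word=2.0)
```

A slower pace for younger children would correspond to a larger `seconds_per_word` value.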

Another possibility is to have the child hold or wear the device that displays text, with of course the appropriate safety precautions. The written words might be presented on eyeglasses in the form of a head-up display (HUD). The HUD would augment the normal view of the world with written text superimposed on the perceived objects and events, as illustrated in Figure 9.

Ideally, TARA will use prior information about the individual caregiver, the child and the situation to determine what words are displayed, the visual properties of the letters that make up each word and the rate of presentation of successive displays containing the written output. Younger children will require larger letters, a slower pace of successive words and perhaps fewer words. For example, TARA recognizes the caregiver describing a red toy car to an eight-month-old reader. Using stored information about the reader, TARA determines that only the word “CAR” should be written on the display. If the child were 14 months old, on the other hand, the words “TOY CAR” would be displayed. A two-year-old child would see “RED TOY CAR.” Like current practice in reading instruction, the written language would be tailored to the child’s perceptual and cognitive capabilities.
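The red toy car example suggests a simple rule for tailoring the display to the reader’s age. The sketch below is only an illustration: the function name `words_for_age` and the 12- and 24-month cutoffs are assumptions, since the text gives just three example ages.

```python
def words_for_age(age_months, description):
    """Pick how many words of a recognized description to display.

    `description` lists the words in spoken order, with the head noun last,
    for example ["RED", "TOY", "CAR"]. The age cutoffs are hypothetical.
    """
    if age_months < 12:
        count = 1  # youngest readers see only the head noun
    elif age_months < 24:
        count = 2
    else:
        count = 3
    return " ".join(description[-count:])


recognized = ["RED", "TOY", "CAR"]
displays = {age: words_for_age(age, recognized) for age in (8, 14, 24)}
```

Here `displays` maps 8 months to “CAR”, 14 months to “TOY CAR” and 24 months to “RED TOY CAR”, matching the example in the text. A fuller version might also return letter size and presentation rate.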

That active learning by doing is more effective than passive learning is well known. Therefore, the child should be able to interact with a TARA display. The display could be touch sensitive to accept input from the child and respond appropriately. This would allow the child to touch the display to issue a command to re-display the text, to trace the letters or to draw them from scratch. The child or the caregiver might initiate other commands to call up other information, such as the corresponding spoken or sign language or even a translation into another language.

I envision that a successful implementation of TARA would provide two major benefits for creating competent readers. First, acquiring literacy naturally would eliminate the need for reading instruction during the first years of schooling. This “decoding” instruction includes the guided instruction about letters and letter combinations, how they map into speech and the recognition of sight words and grammatical forms. In addition, there is evidence that children are also actively learning about the spelling patterns of their orthography and the spellings of specific words. English, for example, has many pairs of homophones in which the same spoken word is spelled in two different ways (for example, “see” and “sea”). Mastery of these skills, however, does not guarantee that the child is capable of reading for understanding. A constant concern in schools today is that many children can read fluently but do not fully comprehend what they are reading. One reason may be that the decoding process, although well-learned, still requires attention and effort that leave fewer resources for processing the meaning of what is being read. Second, children taught by TARA would be better equipped to read for comprehension. A child who masters the understanding of writing before starting school should not face this comprehension barrier. When the child acquires reading naturally at an early age, the meaning of what is being read is embodied in his or her experience, which precludes the current problem that traditionally taught students face.

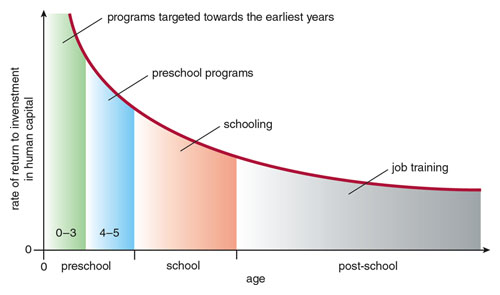

Adapted by Tom Dunne from Heckman and Masterov 2007.

If children are able to learn to read naturally, the impact on society could be huge. This innovation would also help redirect financial resources where they will have the most impact. (The cost of reading instruction and reading remediation in the United States alone is estimated at around $670 billion per year.) Although 90 percent of public education spending is on children between the ages of 6 and 19, 90 percent of brain growth occurs before age 6. By directing public funding toward literacy before age 6, resources can be focused where they will have the most impact, especially on those children with limited access to print and books. University of Chicago Nobel Prize winner James J. Heckman and his student Dimitriy V. Masterov calculated the return on investment in children of different ages. The return is gigantic for children before schooling begins and tapers off dramatically with increasing age.

TARA would also be of particular value for deaf and hard-of-hearing children, because it would provide an opportunity for them to learn written language in parallel with learning sign language, spoken language or both. Evidence from the deaf signing communities indicates that mastery of spoken language does not seem to be a necessary condition to learn to read. Reading skill in deaf readers, for example, is not predicted by phonological processing. In the oral deaf community, deaf children are sometimes bootstrapped into language via written language rather than spoken language. Helen Keller, deprived of hearing and sight by an illness at 19 months, was able to acquire written language delivered in Braille before she learned to perceive speech (by placing her fingers on the talker’s face). For hard-of-hearing and deaf children, written language might be the best entry into spoken language. If true, TARA would be particularly valuable for this population.

A recent report revealed an alarming increase in hearing loss in teens. The number with a slight hearing loss increased 30 percent in the past 15 years, whereas mild or worse hearing loss increased 77 percent. Although teens and parents are becoming more aware of the potential hazards of portable music players and loud concerts, hearing loss may indeed become more pervasive in society. Literacy does not cure hearing loss, but it could alleviate this potential problem in two ways. First, children reading at an earlier age would be more likely to read about this potential hazard, and second, some channels of communication might increasingly be written rather than spoken.

Illustration by Tom Dunne.

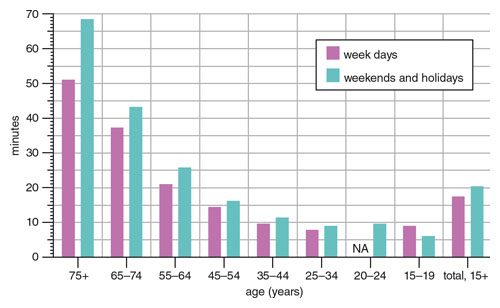

TARA might also lead to a dramatic growth in people’s reading. Figures on the average time spent reading in the United States in 2010 are surprisingly low. (These numbers would be somewhat larger if they included instant messaging, social network postings and e-mail.) It is difficult to predict, but if TARA is successful, it might make reading more enjoyable for its students than for children who receive direct instruction in school.

The past decade has seen a renewed concern with schools and their effectiveness. Schools are still primarily focused on the 3 Rs: reading, writing and arithmetic. If TARA is successful, we might speculate on how schools would change. Because it creates a more natural learning environment, TARA provides for preschoolers what mobile learning devices provide for schoolers. TARA would address the first two Rs. Early reading would open the child’s world to written numbers and math signs and might initiate earlier or at least stronger arithmetic learning.

Photograph courtesy of the author.

TARA, quite simply, represents a sea change in reading and literacy. It has the potential to vastly improve the literacy statistics that currently reflect and constrain nearly half of our population. It could save billions of dollars now spent on elementary reading programs and remedial instruction. And it is based on existing technology, normal human brain function and the developmental capabilities of typical youngsters.

I dedicate this paper to the memory of Richard L. Venezky, who devoted his productive career to unraveling the mysteries of the written word. I would like to thank my students and colleagues who have contributed to my work. Papers discussing my research can be found at http://mambo.ucsc.edu/people/dominic-massaro.html. Applications related to the ideas described in the paper can be found at http://psyentificmind.com/read-with-me-2
